Small Business Bonus Scheme: evaluation

This report presents the results of an evaluation of the Small Business Bonus Scheme (SBBS), and provides recommendations in relation to the SBBS and non-domestic rates relief more broadly.

2. Our approach to evaluating the SBBS

2.1 Methods for evaluating policies

There are various methods of policy evaluation, each with its own strengths and limitations. The method best suited to evaluation will vary between policies and even across evaluation outcomes within the same policy. The goal of impact evaluation is to provide the best evidence possible on the various potential impacts of the policy, given its design, the available data, and the evidence. That is not to say that all evidence should be considered as equally reliable in uncovering the impact of a policy in a particular area.

Some forms of qualitative evidence – such as survey or interview questions asking people about their opinion of a policy – if used on their own, typically rank at the lower end of the reliability scale within economic evaluations, primarily because they rely on subjective judgment and/or reporting of outcomes and experiences. One reason for this is that participants can believe (in some cases correctly) that the benefits they derive from a policy might be affected by the result of the evaluation, and so answer on this basis. This is not to say that these types of evidence cannot provide valuable insight into individuals' experience of a policy, how it has affected them personally, or how it works in practice. Rather, it is to say that the purpose of any economic evaluation is to shed light on the 'true' economic impacts of a policy, over and above qualitative evidence and individual opinions on its strengths and weaknesses.

To understand these wider economic impacts, methods that rely on quantitative analysis of data are typically preferred. These quantitative methods often exploit administrative and other data sources and utilise econometric methods to define and estimate a relevant policy effect. There are a range of these methods available, the most appropriate in a given setting being determined principally by the design of the policy itself and the data available. Among quantitative methods there are still large differences in the degree of reliability, however.

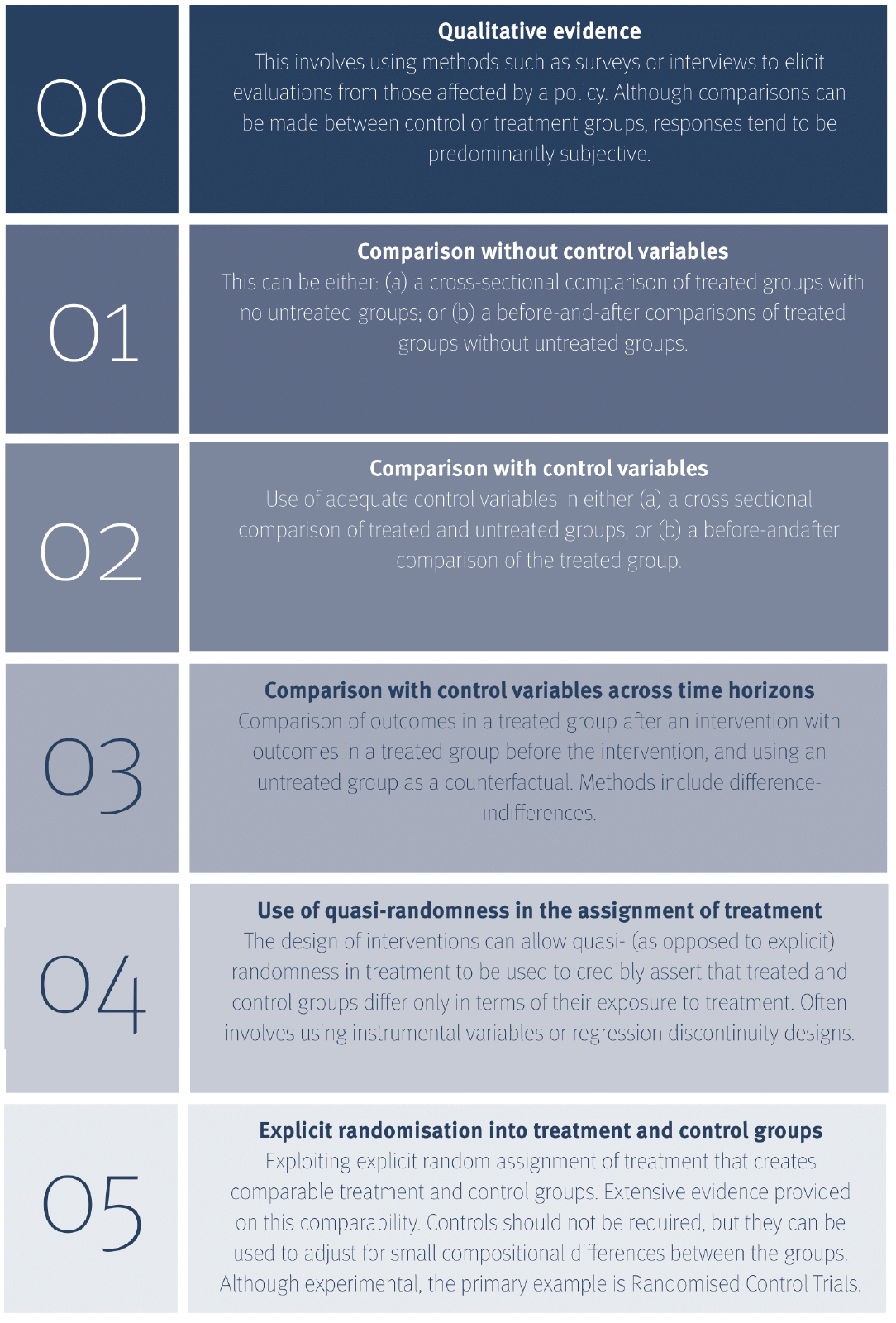

Understanding the level of credibility attached to each type of evidence is crucial for effective policy development. The Economic and Social Research Council (ESRC) and UK Government funded "What Works Centre for Local Growth" has recently been established with the specific aim of providing a framework through which policymakers can evaluate the evidence on the effects of a policy.[12]

Source: What Works Centre for Local Economic Growth and Fraser of Allander Institute.

Their five-point scoring scale focuses on evidence from evaluations seeking to understand the effect of economic policies, on average, on the outcomes of all of those it affects/affected.[13] They define Level 1 – the lowest level of robustness – as simple cross-group comparisons of those exposed and not exposed to a policy. We have added a base level of zero to represent the robustness of some forms of qualitative evidence in this type of impact evaluation, particularly where respondents are asked about the effectiveness of a policy from which they themselves benefit.

Again, this is not to say that qualitative evidence does not provide important insights into individuals' experiences of a policy. In particular, qualitative evidence is often the only basis allowing the generation of explanations for why policies do or do not work as intended. As we explain in the following subsection, we explicitly use qualitative evidence as part of our evaluation method to understand how business owners have interacted with the SBBS. Rather, placing certain forms of qualitative evidence at the bottom of this economic evaluation evidence scale highlights that it cannot reliably be used to rigorously understand the large-scale effect an economic policy has on the target outcomes of those that it affects: in the example of evaluating the effect of the SBBS, avoiding subjectivity in survey responses is very difficult indeed, and small sample sizes combined with endogenous response rates create difficulties in extrapolating the impact on business growth or survival among the broader business base.
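To make the Level 1 definition on the scale concrete, the following is a minimal sketch of a simple cross-group comparison: the difference in average outcome between those exposed and those not exposed to a policy. The function name `naive_group_difference` and the figures are hypothetical and purely illustrative; they are not drawn from the data used in this evaluation.

```python
from statistics import mean


def naive_group_difference(outcomes, exposed):
    """Difference in mean outcome between exposed and unexposed units.

    This is a Level 1 style comparison: it makes no adjustment for
    pre-existing differences between the two groups.
    """
    treated = [y for y, d in zip(outcomes, exposed) if d]
    control = [y for y, d in zip(outcomes, exposed) if not d]
    if not treated or not control:
        raise ValueError("need at least one unit in each group")
    return mean(treated) - mean(control)


# Hypothetical turnover growth (%) for six businesses (illustrative only).
growth = [2.0, 3.0, 4.0, 1.0, 1.5, 2.0]
# Hypothetical indicator of whether each business received the relief.
received_relief = [True, True, True, False, False, False]

diff = naive_group_difference(growth, received_relief)
print(f"Naive difference in mean growth: {diff:.2f} percentage points")
```

A comparison of this kind sits low on the scale because the two groups may differ in ways unrelated to the policy (for example, in size or sector), so the difference cannot on its own be read as a causal effect.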

2.2 The three components of our evaluation

No single evaluation method in Figure 2.1 will provide a complete picture of the impact of a policy. Instead, where possible, methods should be combined in order to gain as broad an understanding as possible of how the policy has affected those most exposed to it. In this section, we set out how we used different elements of Figure 2.1 to undertake an evaluation of the SBBS. We begin by describing the data that were made available to us, before setting out the key questions we were asked to address. We then explain our methodological choices.

We were given access to two main data sources (a full explanation of which is included in Section 3). The first of these was property-level data on characteristics such as rateable value (RV), location, business type, and non-domestic rates (NDR) reliefs received. These were available for all non-domestic properties in Scotland between the years 2009 and 2020, but contained no information on business-level outcomes, such as turnover.

Second, we were given access to administrative data on business outcomes such as turnover and employment, which are collected through official business surveys. These data were obtained through the ONS matching property-level information to UK Government administrative records, and, for the reasons we explain in detail in Section 7, were only available for a sub-sample of businesses in Scotland.

Specifying a methodology also depends on the objectives of the policy evaluation. As we outlined in the introduction to this report, there were three stated objectives of the SBBS evaluation:

a) understand who is getting the relief;

b) assess the impact of the scheme on relief recipients and identify wider benefits and costs; and

c) consider whether the current scheme could be improved.

Given these objectives and the data made available to us, we chose an approach to evaluating the SBBS that used three methods:

1. a descriptive analysis of data on all non-domestic properties and all SBBS recipients (objectives a, c);

2. an econometric analysis of data on business outcomes to try to uncover the causal effect of the SBBS on the business outcomes of its recipients (objectives b, c); and

3. a survey of small businesses to understand the wider impacts of the SBBS on business owners (objectives a, b, c).

Method 1 relies solely on the property-level data, and method 2 on the matched administrative data. Given they are both quantitative, we augmented these two approaches with a survey of small businesses (method 3) to provide both additional self-reported business-level data and an insight into business owners' subjective experiences of the SBBS. Using different methods in this way is also considered good practice, as it provides a further check of robustness, so that conclusions are not drawn based upon the findings of one particular methodology.

Table 2.1 details the research objectives we set out to address, and where these are considered in this report. Section 4 contains our descriptive analysis, Section 6 the analysis of the survey results (the design of which is outlined in Section 5), and Section 7 contains our econometric analysis.

Table 2.1: Research objectives and where they are addressed in this report

| To understand… | Section of the report |
|---|---|
| which regions and property types benefit most from SBBS relief | Sections 4 and 6 |
| whether eligible/recipients receive other support and from which schemes | Sections 4 and 6 |
| how take-up of the SBBS differs across rateable value, property types, and location | Section 4 |
| the non-domestic property base in Scotland | Section 4 |
| how the non-domestic property base has changed over time | Section 4 |
| how take-up of the SBBS has changed over time | Section 4 |
| the relationship between rateable value and business size | Sections 6 and 7 |
| whether there has been capitalisation by landlords | Section 6 |
| whether the SBBS supports activities like investment and paying the Real Living Wage | Section 6 |
| whether the SBBS supports better business outcomes in terms of output and employment | Sections 6 and 7 |
| the indirect effects of the SBBS on its recipients e.g. potential disincentives to improve the property under occupation, or to move to a larger property | Section 6 |
| whether the SBBS is helping small businesses survive | Section 6 |
| the awareness of the SBBS among businesses | Section 6 |
| whether the SBBS supports town centres | Sections 4 and 6 |

2.3 The evaluation timeline and its interaction with the Covid-19 pandemic

This evaluation began in the summer of 2019, with the results originally scheduled to be published in spring 2020. Unfortunately, there were several events outside of our (or the Scottish Government's) control over the course of 2019 and, most importantly, 2020, that delayed or limited our analysis.

Table 2.2 presents the original timeline for this evaluation and compares it to the eventual timeline, highlighting the key events leading to delays.

The Covid-19 pandemic and ensuing national lockdown was the primary reason for the eventual end date of the evaluation being pushed back. Over and above the delay caused through a disruption to working patterns, two major stages of the pandemic response coincided with the completion of two stages of our evaluation, limiting our ability to carry them out as originally planned.

First, the outbreak of Covid-19 and various announcements and responses to it aligned with the period in which our business survey was in the field. This meant that the surveys reached small businesses during a period in which many were subject to various lockdown restrictions and were also facing unprecedented financial challenges.

In addition, both the UK and Scottish Governments supported small businesses during this time through NDR relief. This brought the importance of NDR to businesses' ability to operate to the attention of the public, and business owners, perhaps to a greater extent than it had been in the past. Together, these meant that any survey responses received could not necessarily be relied upon to provide an accurate representation of businesses' outcomes or experience of the SBBS prior to the pandemic.

Second, Part 2 of our evaluation, the econometric analysis, relied upon access to data that could only be accessed from a secure computer terminal. This is to protect the confidentiality of any business data used in the analysis. In our case, this was located in the Scottish Government building in Atlantic Quay, Glasgow. The process for gaining access to the data in this way is lengthy, and progress to access the data depends on the capacity of the ONS (the organisation that controls access to the data) as well as the number of other researchers applying for similar access.

From March 2020, we could no longer access Atlantic Quay given Covid-19 restrictions. We explored two alternative methods of accessing the data: accessing it remotely through secure terminals in the University of Strathclyde, or doing the same but from home. Both of these required putting in place additional security agreements and protocols. For technical reasons, we focused primarily on pursuing access to the data through secure terminals in a University building. Doing so, however, depended on our ability to access the relevant buildings given the various government guidelines that were in place for travelling to work, as well as University policy on how buildings and offices could be used.

Further complicating data access, the ONS experienced an enormous increase in workload, including increased demand for remote access to secure locations. This slowed the process down considerably.

We were able to access both the data and the appropriate university building within government guidelines in November 2020 for two days per week between the hours of 8am and 6pm. Originally, we had planned that this would allow us to work on the econometric analysis over November and early December 2020 to produce a first draft. This could then be discussed with the Scottish Government, and subsequent changes made for a revised draft at the beginning of 2021. Glasgow was placed in a tier 4 lockdown as of 20 November 2020, however, and the University closed all buildings for anything but essential access as per government guidelines. Fortunately, given that this project relied on data that could not be accessed from home and was working to a deadline before Christmas, we were able to apply for special access. Special access for two days per week was granted in the first week of December until 17 December 2020, when the University closed for Christmas.

During this time, we produced an initial draft of an econometric analysis. This draft was first cleared through the ONS's processes for the vetting of statistical output from the secure data lab, before we were able to share it with the Scottish Government for discussion. Our plan was that we would be allowed back into the university building in January to access the secure terminal and produce a final draft incorporating points from both this and internal discussions. However, Scotland was placed in a stricter lockdown from 4 January 2021, meaning we were prevented from accessing the University from this date and could therefore not undertake any further econometric work.

After we discussed the draft econometric analysis with the Scottish Government, which had shared it with an internal peer review group, it was decided in mid-February that the potential insights to be gained from additions to the econometric analysis outweighed the cost of delaying the project further to regain access to the data. This decision was primarily based on the Scottish Government realising as part of the review process that data they hold on draft valuations could perhaps add significant value to this portion of the evaluation. As a result, completion of our econometric analysis was delayed further until the new data brought forward by the Scottish Government were uploaded to the secure setting and we were able to either: (a) access the University of Strathclyde; or (b) gain home access to the ONS secure data.

Resumption of this work through the former depended entirely upon the lifting of Covid-19 restrictions, over which there was a great deal of uncertainty. As of mid-February, there was no indication that the Fraser of Allander team would be able to gain access to the University buildings until the summer of 2021. We therefore intensified our focus on gaining home access to the data.

Home access was obtained in April 2021; however, the new data were not uploaded into the secure setting until June. It was agreed with the Scottish Government that the work in this part of the evaluation would therefore resume after other commitments the Fraser of Allander team had during the months of June, July, and August were complete. Work therefore finally resumed on the econometric analysis in late August, and a final draft of this – and the full report – was submitted to the Scottish Government for review in September 2021.

| Action | Original date | Delayed date | Reason for delay |
|---|---|---|---|
| Project Commencement | June 2019 | | |
| Econometric analysis | February 2020 | September 2021 | Delay to data access; Covid-19 pandemic; additional data provided by Scottish Government |
| Descriptive Analysis | September 2019 | | |
| Survey Pilot | August 2019 | November 2019 & January 2020 | Delays to survey development; requirement for a second pilot |
| Survey Launch | September 2019 | March 2020 | Refinement of survey following two pilots; avoiding Christmas and the 2019 general election |
| Survey Close | October 2019 | April 2020 | |
| Survey Analysis | January 2020 | September 2020 | Delays to survey launch; access to paper survey responses delayed due to Covid-19 pandemic |
| Draft final report | February 2020 | February 2021 | Combination of the above delays |
| Draft final report with additional econometrics | September 2021 | | |
| Final report | March 2020 | December 2021 | |
