Developing the Young Workforce (DYW) regional groups: formative evaluation

External evaluation to examine the early operations of four of the most established Developing the Young Workforce (DYW) regional groups. The study was carried out between December 2017 and April 2018.

3. Methodology

Introduction

3.1 This chapter reports on the evaluation methodology. It begins with an overview of the three main stages involved, followed by details of the approach taken to each. It concludes with an assessment of the relative strengths and weaknesses of the approach, as well as the lessons that can be learned to inform future evaluation activity – both for the DYW Regional Groups and the wider DYW policy agenda.

Overview

3.2 The evaluation focussed on four of the 21 DYW Regional Groups – Ayrshire; Edinburgh, Midlothian and East Lothian; Inverness and Central Highland; and North East. These Groups were pre-selected by the Scottish Government to ensure a mix of newer and more mature groups, urban and rural areas and different approaches taken to delivering DYW activity. All four Groups are hosted by local Chambers of Commerce.

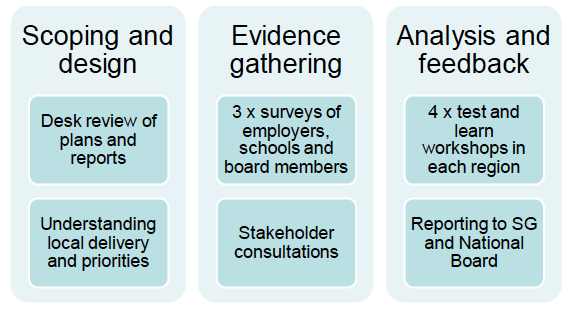

3.3 The evaluation was carried out between December 2017 and April 2018. There were three main stages involved, as set out in Figure 3‑1. The sections that follow report on the specifics of the activities associated with each stage.

Figure 3‑1: Overview of methodology

Source: SQW

Scoping and design

3.4 The first stage of the evaluation involved developing an understanding of the key priorities and activities of the four Regional Groups. This was achieved through:

- A desk review – of background documentation pertaining to the four Regional Groups included within the evaluation. This included grant award letters and KPI / progress reports submitted to the Scottish Government.

- Scoping consultations – with national and regional stakeholders, including members of the DYW National Group and the Chairs, Executive Leads and a selection of board members of each of the four DYW Regional Groups[4].

3.5 The findings from the scoping stage were reported to the Scottish Government in January 2018. This was followed by a session with the DYW Measuring Impact Working Group in early February to discuss the key messages and agree on the approach to the evidence gathering stage, including the design of the research tools.

Evidence gathering

3.6 The evidence gathering stage was designed to capture both breadth and depth of feedback from stakeholders involved with the DYW Regional Groups. This was done through:

- Online surveys – three surveys were developed to gather feedback from Regional Group Board Members, schools and colleges, and employers that had engaged with the Regional Groups[5].

- In-depth consultations – the surveys were followed up with one-to-one consultations with a selection of employers, schools and wider partners and stakeholders identified as having engaged with the DYW Regional Groups[6].

3.7 It was agreed at the scoping stage that the online surveys would be distributed by the Regional Groups rather than the SQW evaluation team. The rationale was two-fold: this would mitigate any data protection issues associated with sharing stakeholder contact details, and people would be more likely to respond to a request from a known contact.

3.8 SQW prepared the online surveys and shared these with the Executive Leads for each of the four Regional Groups on 16 February 2018, along with covering emails and suggested text and instructions for issuing reminders. The surveys remained open for just over two weeks, closing on 6 March 2018.

3.9 The Executive Leads were asked to issue the surveys to all schools, colleges and employers that had engaged with the Regional Group, as well as all Regional Group Board Members. Table 3‑1 shows the total number of responses received and Table 3‑2 shows the associated response rates[7].

Table 3‑1: Total survey responses

| Regional Group | Regional Group Board Members | Schools / Colleges | Employers |
|---|---|---|---|
| Ayrshire | 6 | 33 | 127 |
| Edinburgh, Midlothian & East Lothian | 7 | 20 | 17 |
| Inverness & Central Highland | 8 | 10 | 39 |
| North East | 5 | 8 | 48 |
| Total (four Groups combined) | 26 | 71 | 231 |

Source: SQW

Table 3‑2: Survey response rates

| Regional Group | Regional Group Board Members | Schools / Colleges | Employers |
|---|---|---|---|
| Ayrshire | 86% | 67% | 17% |
| Edinburgh, Midlothian & East Lothian | 47% | 47% | 6% |
| Inverness & Central Highland | 62% | 63% | 31% |
| North East | 38% | 28% | 6% |
| Total (four Groups combined) | 54% | 52% | 12% |

Source: SQW
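The relationship between the two tables can be illustrated with a short sketch. It assumes the usual definition of a response rate (responses divided by the number invited); the report does not state the number of invitations issued, so the figures produced by `implied_invited` are rough back-calculations from rounded percentages, not reported data. All function and variable names here are hypothetical.

```python
# Responses per Regional Group, copied from Table 3-1.
RESPONSES = {
    "Ayrshire": {"board": 6, "schools_colleges": 33, "employers": 127},
    "Edinburgh, Midlothian & East Lothian": {"board": 7, "schools_colleges": 20, "employers": 17},
    "Inverness & Central Highland": {"board": 8, "schools_colleges": 10, "employers": 39},
    "North East": {"board": 5, "schools_colleges": 8, "employers": 48},
}

# Combined response rates (%) as published in Table 3-2.
COMBINED_RATE_PCT = {"board": 54, "schools_colleges": 52, "employers": 12}


def column_totals(responses):
    """Sum the responses for each respondent type across all groups."""
    totals = {}
    for counts in responses.values():
        for respondent_type, n in counts.items():
            totals[respondent_type] = totals.get(respondent_type, 0) + n
    return totals


def response_rate_pct(n_responses, n_invited):
    """Response rate as a percentage of those invited (assumed definition)."""
    if n_invited <= 0:
        raise ValueError("n_invited must be positive")
    return 100.0 * n_responses / n_invited


def implied_invited(n_responses, rate_pct):
    """Approximate number invited implied by a rounded published rate.

    This is an estimate only: the published rates are rounded to whole
    percentages, so the true figure may differ.
    """
    return round(n_responses * 100.0 / rate_pct)


totals = column_totals(RESPONSES)
for respondent_type, total in totals.items():
    estimate = implied_invited(total, COMBINED_RATE_PCT[respondent_type])
    print(f"{respondent_type}: {total} responses, roughly {estimate} invited")
```

The column totals computed this way match the "Total" row of Table 3‑1, which is a quick consistency check on the published counts.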

3.10 The surveys were followed up with 25 in-depth consultations with a selection of employers, schools and wider partners and stakeholders that had engaged in DYW activities organised through the Regional Groups[8]. The consultees were nominated by the Executive Leads for each of the groups.

3.11 The survey response rates for Regional Group Board Members and schools / colleges were generally high across all areas and so it is safe to assume that the results are robust and representative. However, the response rates were much lower for employers across all areas, and were particularly low in the North East and in Edinburgh, Midlothian & East Lothian. Possible explanations for this were that:

- Employers in the North East received the survey one week later than those in the other regions and so had less time to complete it (one and a half weeks). Moreover, the weblink to the survey was embedded within a newsletter rather than in a standalone email and so it is possible that some did not see it.

- Employers in Edinburgh, Midlothian & East Lothian were reported to have recently been invited to participate in two other online surveys distributed by the Regional Group and so it is possible that they were suffering from 'survey fatigue'.

Analysis and feedback

3.12 The findings from the surveys were analysed and reported back to each of the four groups via a series of two-hour workshops with Regional Group Board Members[9]. The format of the workshops involved SQW reporting back on the headline findings for the region, relative to the average for the four regions combined, and facilitating a discussion around these. The Regional Groups each received a slide pack with the survey findings for their region following the workshop.

Reflections on approach

3.13 A key strength of the evaluation is that it gathered both a breadth of perspectives, through the online surveys, as well as in-depth feedback through the one-to-one consultations. In addition, the regional workshops provided the opportunity for Board Members to review and reflect on what was going well and where challenges remained. These discussions helped to strengthen the analysis and interpretation of the evaluation findings.

3.14 However, there were some limitations. These mainly relate to a lack of control on the part of the evaluation team to recruit participants – both for the surveys and the consultations. A further (related) limitation is that feedback was only invited from those employers, schools, colleges and partners who had actively engaged with the Regional Groups. This limited the scope for exploring the barriers faced by those who had not engaged. Combined, these factors point to an element of positive bias in the evaluation findings.

3.15 A further important limitation of the evaluation is that it did not incorporate feedback from young people. This was raised as a concern at the scoping phase, and in one of the regional workshops, but was beyond the scope of the current assignment. We understand that discussions are underway around potential options for addressing this.

3.16 Another issue raised at various points throughout the study was a general lack of clarity on the specific role and contribution of the Regional Groups amongst the increasingly crowded landscape of initiatives aimed at improving the employment outcomes of young people, many of which relate to the wider DYW programme. The result was that some consultees were not clear on 'who had done what' and so struggled to comment on the effectiveness of the Regional Groups, or to attribute change to their activities.

3.17 The key lessons that can be learned to inform future evaluations – both of the DYW Regional Groups and the wider DYW policy agenda – relate to:

- Access to stakeholder contact details – a more robust approach would have involved the evaluation team having access to contact details for employers and schools in order to recruit participants directly. For this to be possible in future, the Regional Groups would need to request permission from the employers and schools they are working with for their contact details to be shared. Alternatively, if Marketplace is to be rolled out nationally, this could provide a potential route to accessing contact details, although, again, permission would need to be sought for them to be used for the purposes of research / evaluation.

- Inclusion of non-participants – future evaluations should consider how best to include employers, schools and local / regional partners that have not engaged in DYW activities. This would provide a more balanced view of how well or otherwise the Regional Groups are achieving their objectives. It would also generate valuable insights into the barriers faced by different stakeholder groups to engaging in this type of activity, as well as potential routes to overcoming these.

- Engaging young people – the aim of the DYW policy agenda is to improve the labour market and employment outcomes of young people. It will therefore be essential for any future evaluation to incorporate feedback from young people themselves. This is the only route to fully understanding how the range of activities being funded and delivered through this policy agenda is having an impact.

- Clarity on the specific role and contribution of the DYW Regional Groups – it can be challenging for evaluation participants to isolate the activities and associated outcomes / impact of a single initiative, particularly when the 'brand' sits within a wider programme of activity (such as DYW). In future, consideration should be given to: how far evaluation should focus on one element of the wider DYW programme; and whether the activities of the Regional Groups can be clearly described to help evaluation participants feed back on these.
