Attainment Scotland Fund Evaluation: School Survey Report, 2025

This report presents the findings of a school survey on the Attainment Scotland Fund, undertaken in spring 2025. The survey explored the views of a range of school-based staff on approaches, the perceived impact of the Fund on the poverty-related attainment gap, and sustainability.

Appendices

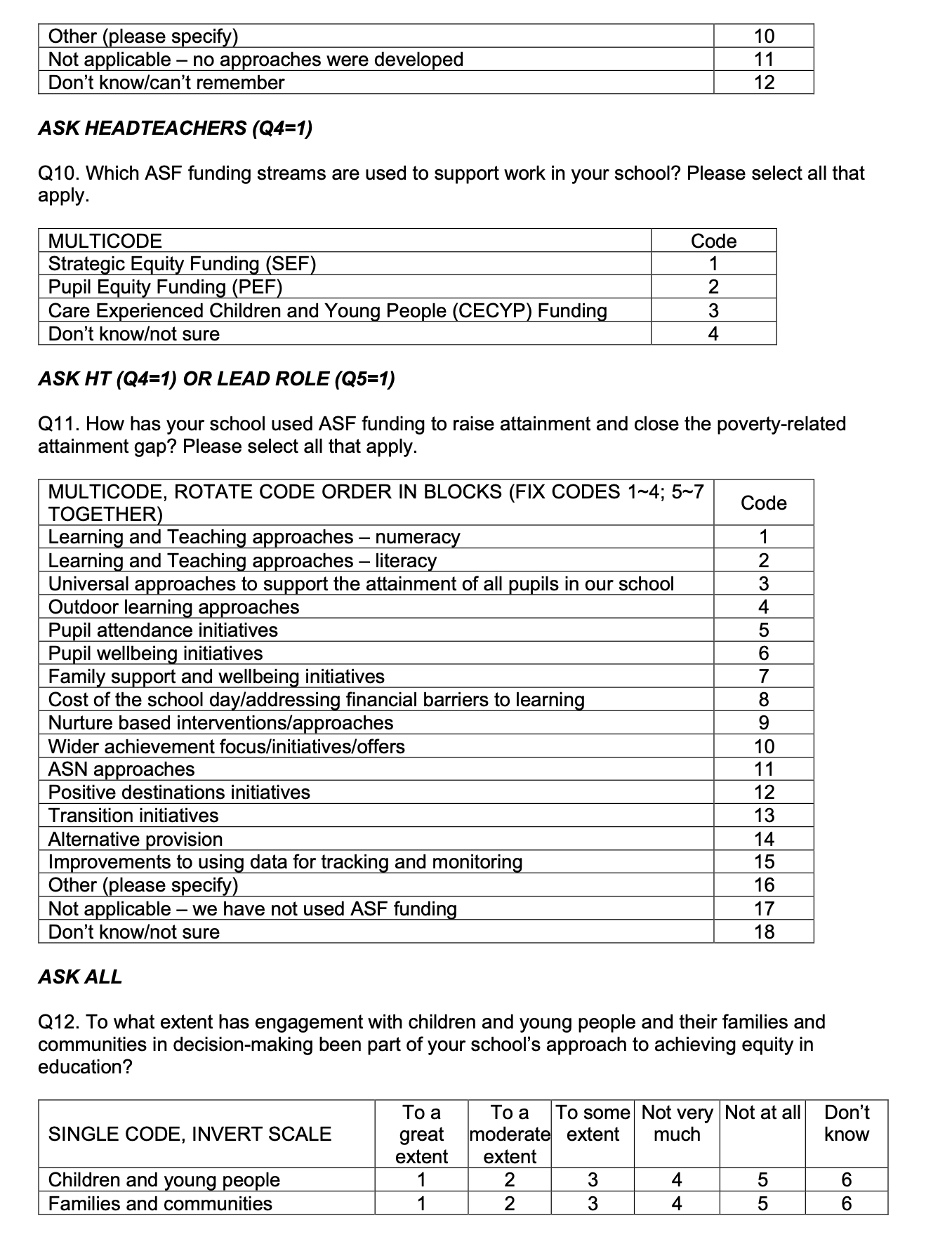

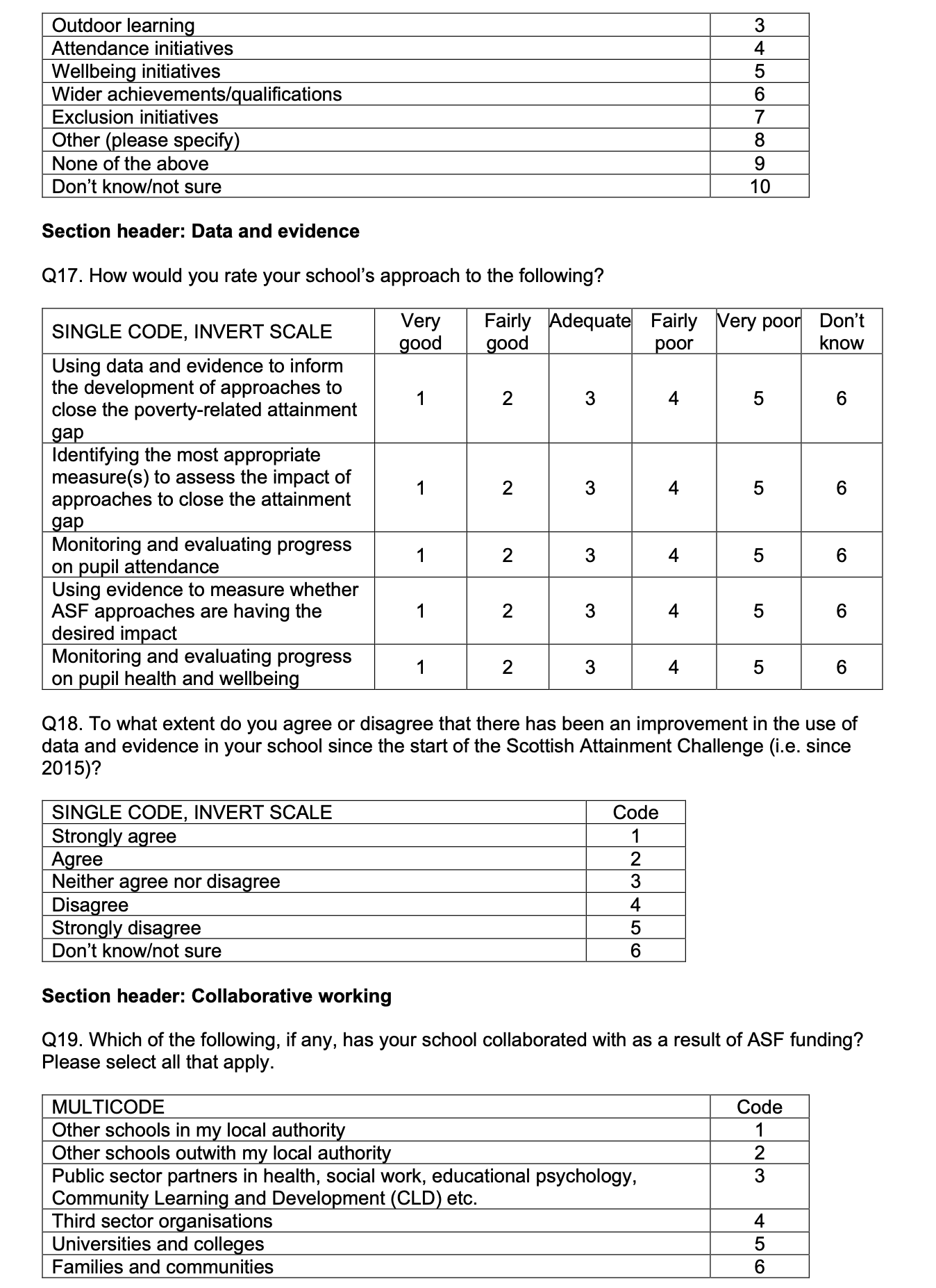

Appendix 1: Survey questionnaire

Survey title: ASF Evaluation Survey

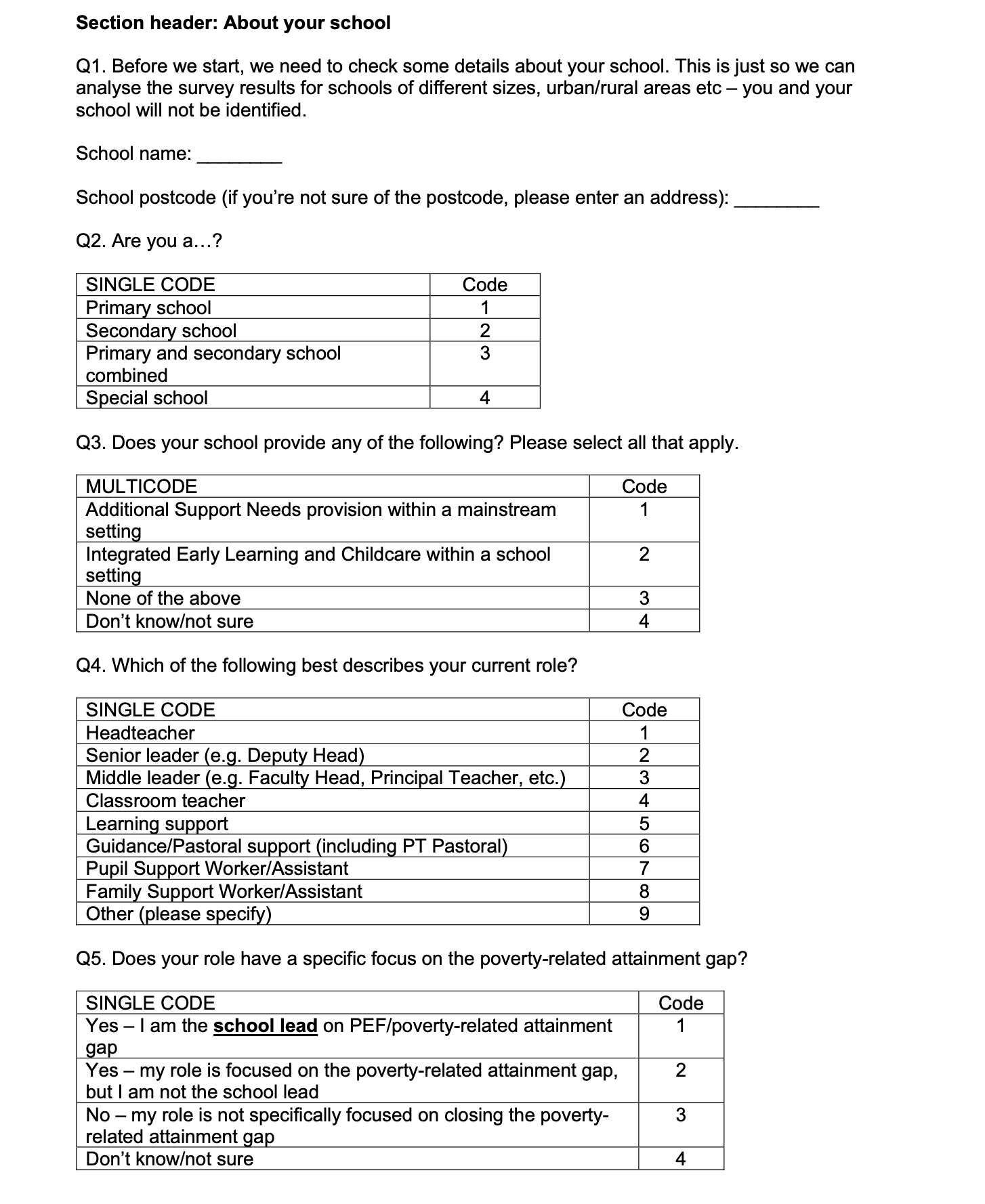

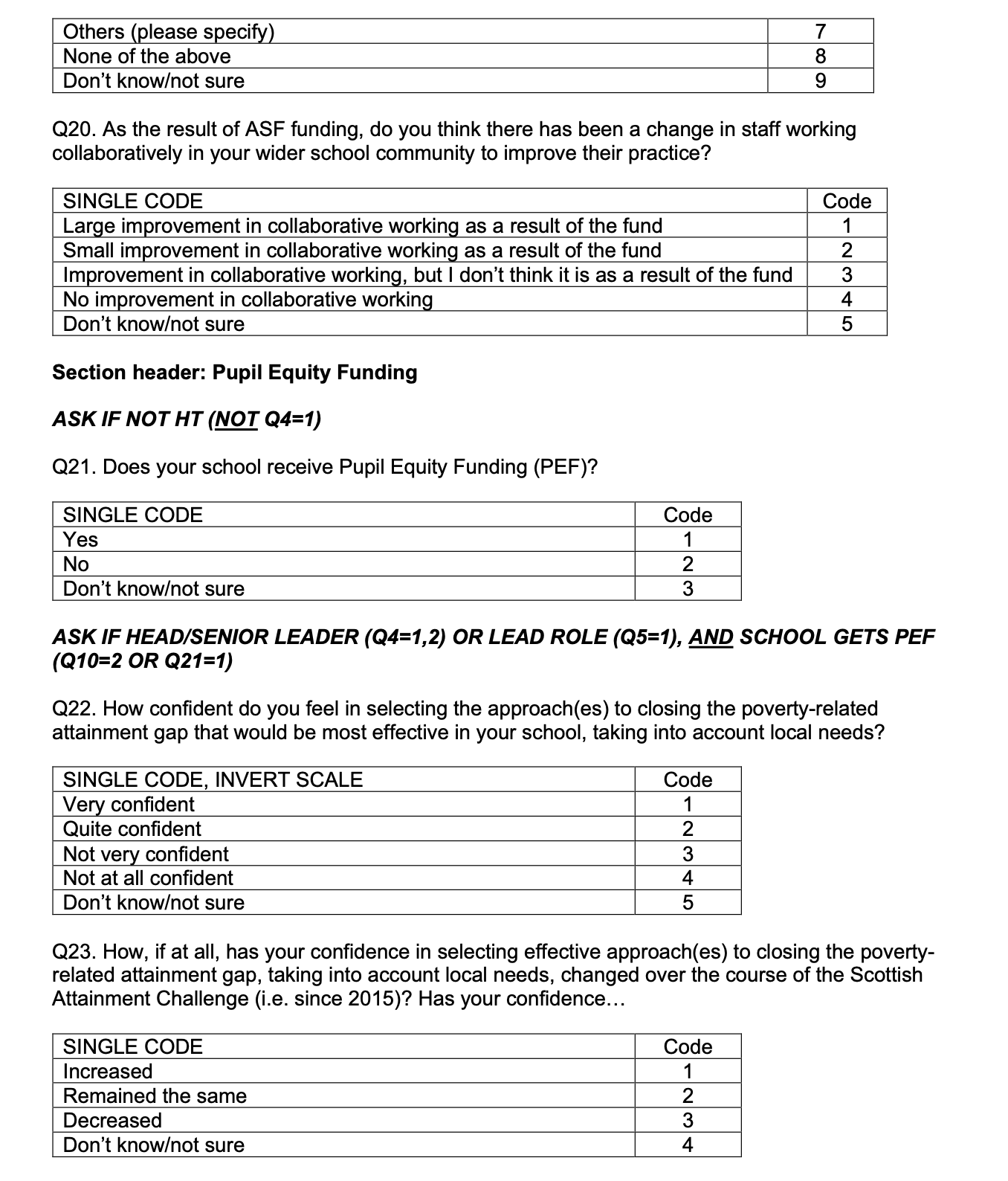

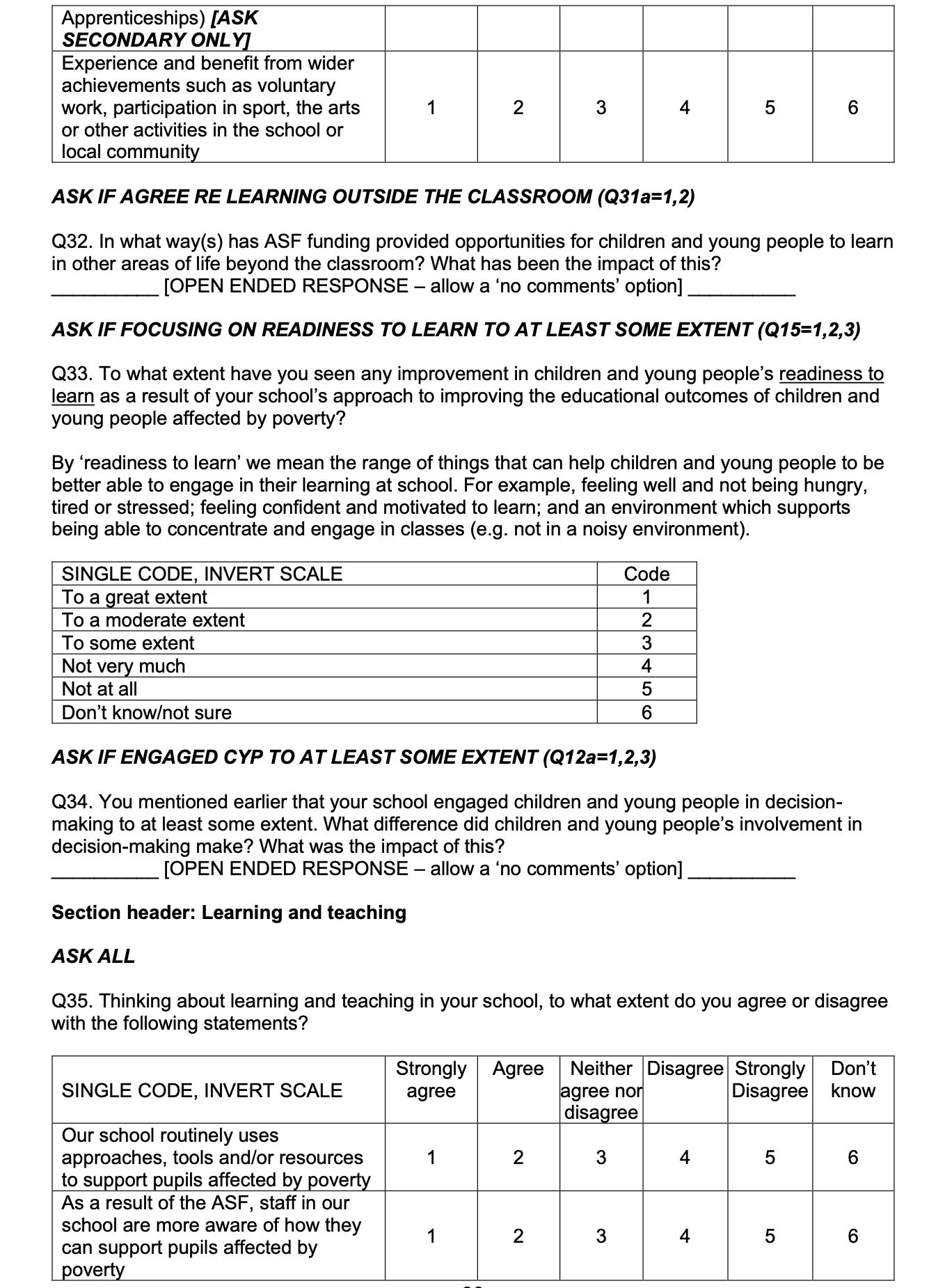

![Page 1 of the survey. Contains the following text: Thank you for taking part in this survey about the Attainment Scotland Fund (ASF). The Scottish Attainment Challenge was launched in 2015 and is supported by the ASF, the collective name for the funding streams that support the SAC (Strategic Equity Funding, Pupil Equity Funding, and Care Experienced Children and Young People Funding). The Scottish Attainment Challenge aims to improve outcomes for children and young people impacted by poverty with a focus on tackling the poverty-related attainment gap between children from the least and most disadvantaged communities.

We are interested in hearing from headteachers, senior and middle leaders, classroom teachers and other school-based staff about your experience of the ASF and any impacts you have seen in your school. Even if you are not working in an ASF related role, we are still interested in your views. Your feedback is extremely valuable in helping the Scottish Government understand how the ASF is being implemented across Scotland and the impact it is having. Findings from the research will provide important learning to support the education system and future policy development in this area.

The survey should take around 10-15 minutes to complete. There are questions on the following topics:

About your school

The poverty-related attainment gap

How ASF has been used (including data and evidence, collaborative working, and PEF)

Impacts of the ASF (including on learning and teaching, and school culture/ethos)

Sustainability.

You can save your responses and return to the survey later if you need to – press the ‘save’ button at the bottom of the page to save and receive instructions about how to resume completion.

At the end, you will have the opportunity to enter a prize draw to win £500 for your school. Terms and conditions for the prize draw can be found here [INSERT LINK].

Your response is strictly confidential. The survey is being run for the Scottish Government by Progressive Partnership, an independent research company. We work in line with the UK General Data Protection Regulation (GDPR), the Data Protection Act 2018 and the Market Research Society Code of Conduct. Please be assured that your confidentiality and anonymity is respected at all times. The Scottish Government will not know who has taken part, and Progressive’s reporting of survey results will make sure that no individual or school can be identified. We will only collect personal data (contact details) if you choose to volunteer to take part in further research at the end of this questionnaire. You can view a copy of Progressive Partnership’s Privacy statement here.

SQ1: Consent

Are you happy to continue with the survey?](/binaries/content/gallery/publications/research-analysis/2025/08/attainment-scotland-fund-evaluation-school-survey-report-2025/SCT0725754512-001_g12.png)

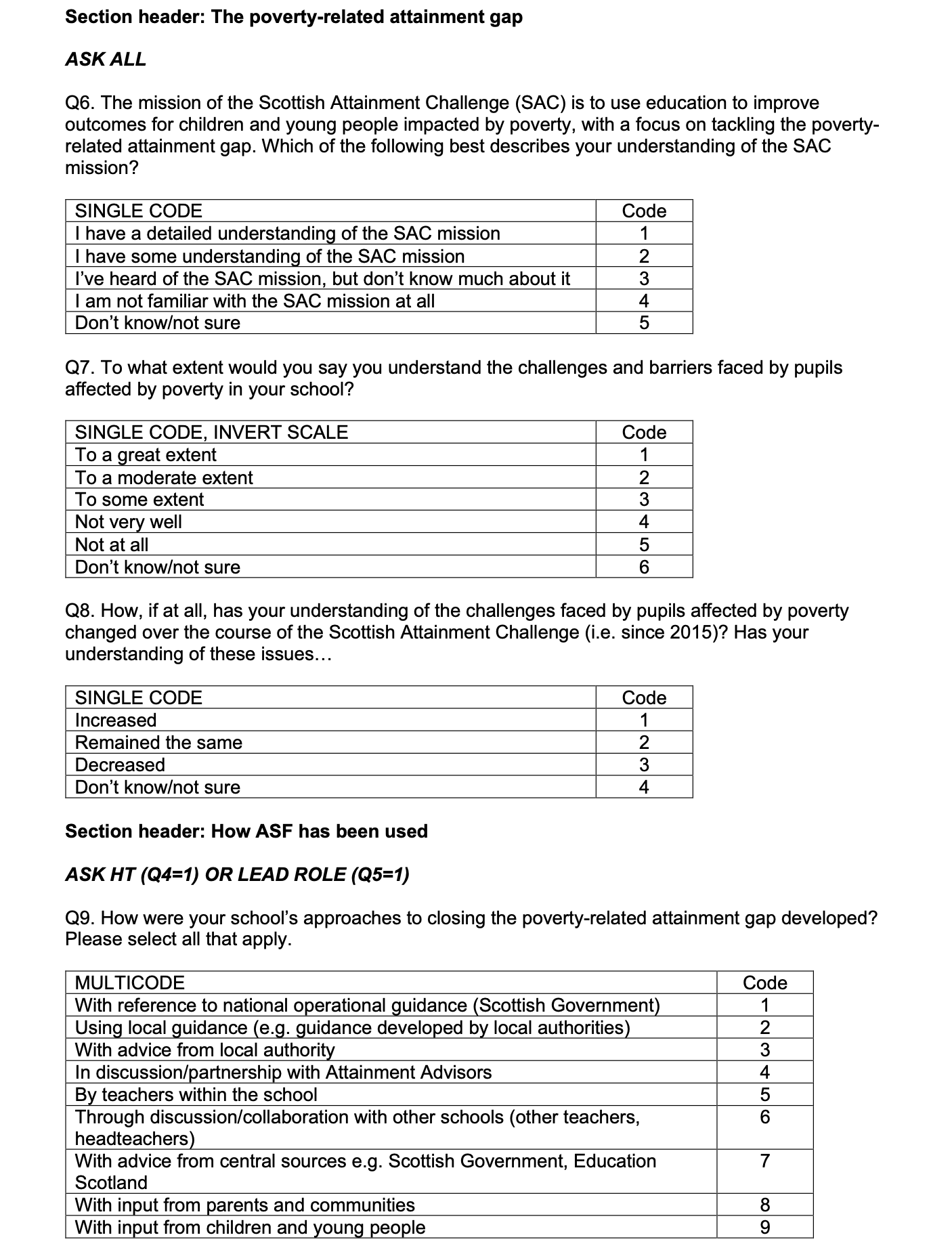

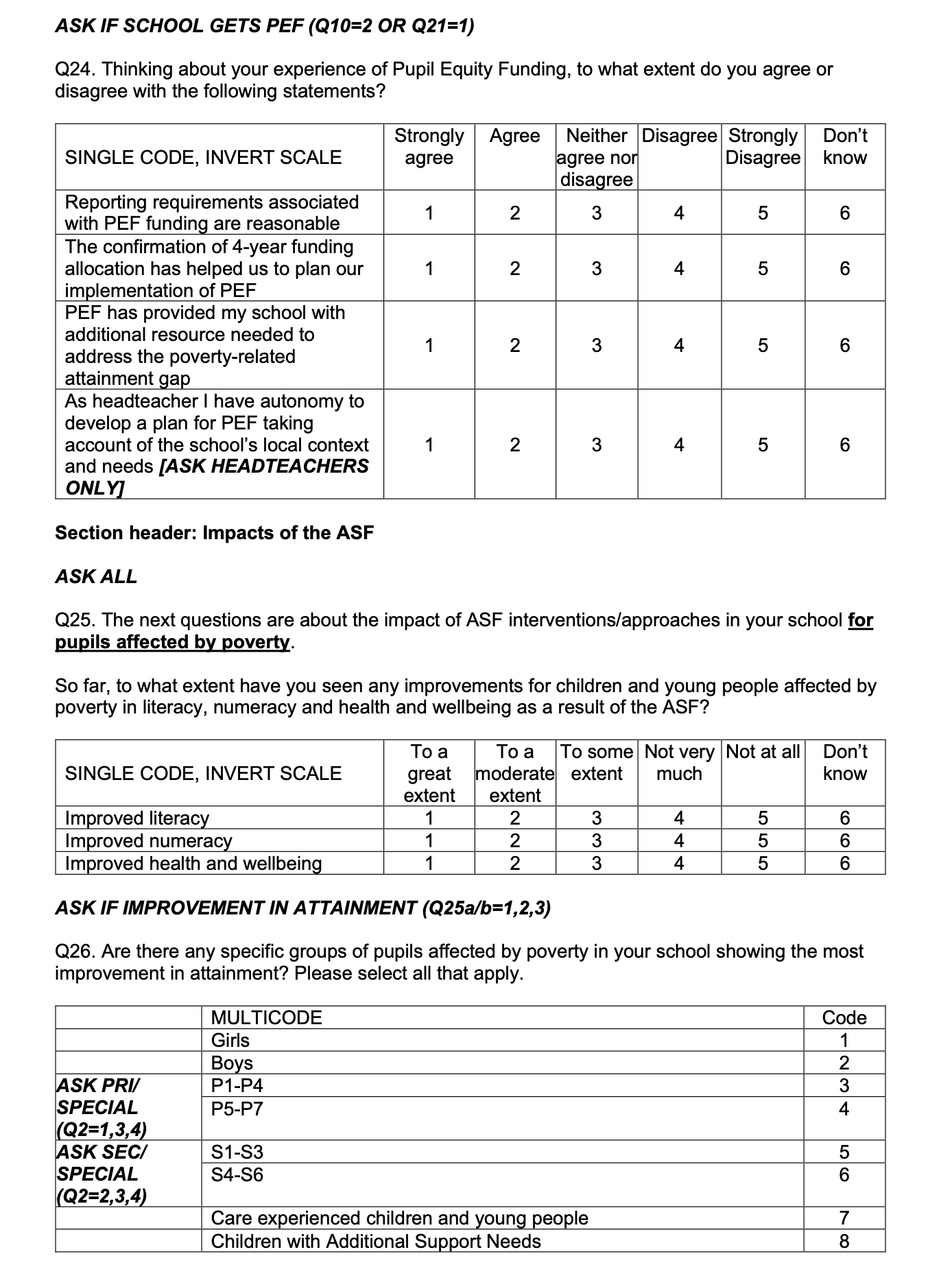

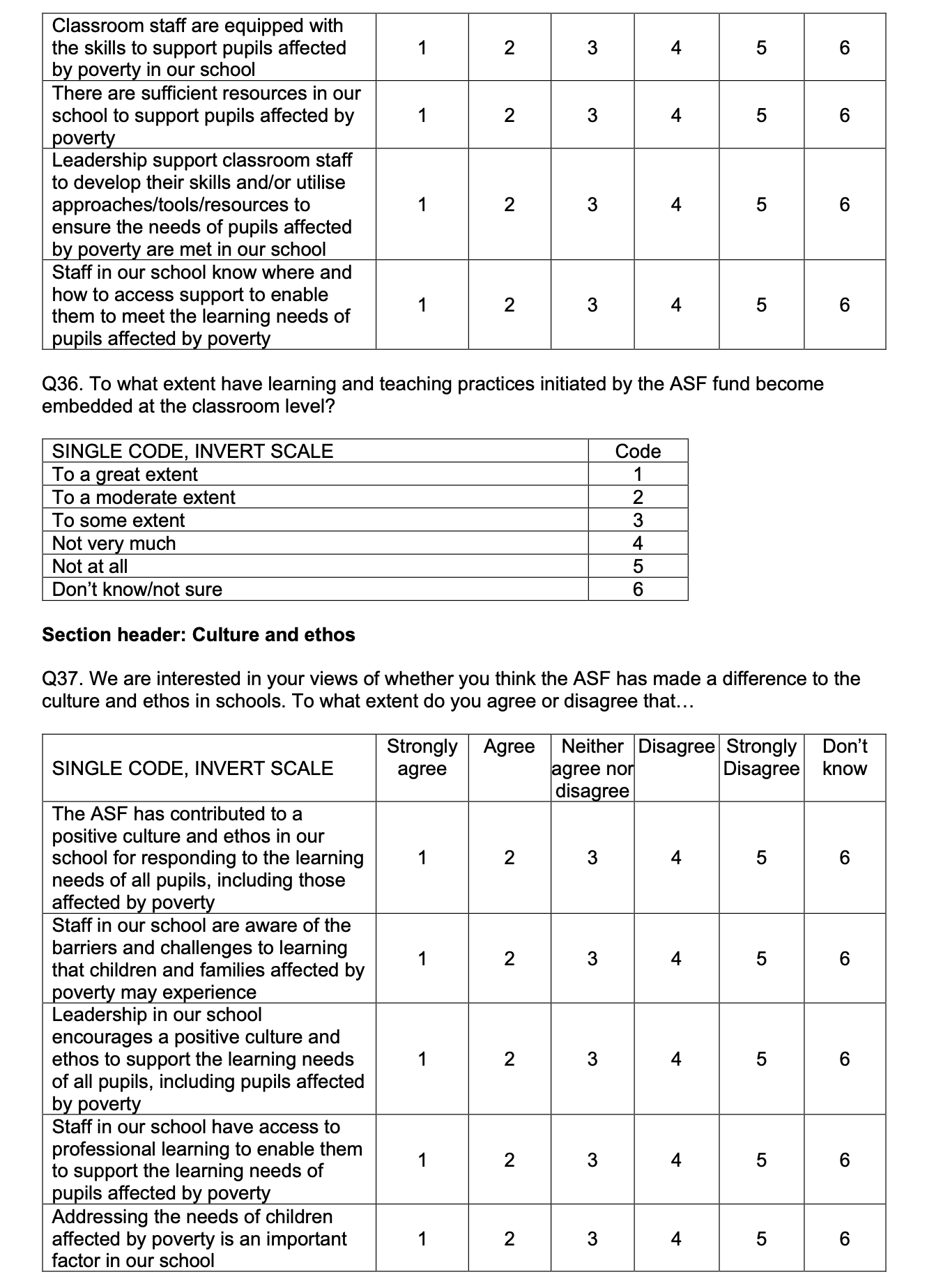

![Page 5 of the survey. Contains the following text: ASK IF ENGAGED CYP/FAMILIES TO AT LEAST SOME EXTENT (Q12a=1,2,3 or Q12b=1,2,3)

Q13. How did you engage with [text substitution: children and young people and/or families/communities] in decision-making? What did you do?

ASK ALL

Q14. Have you taken part in any professional learning in any of the following, in relation to the poverty-related attainment gap? Please think only about professional learning in relation to the poverty-related attainment gap when answering this question. Please select all that apply.

Q15. To what extent does a focus on children and young people’s readiness to learn feature in your school’s approach to improving the educational outcomes of children and young people affected by poverty?

By ‘readiness to learn’ we mean the range of things that can help children and young people to be better able to engage in their learning at school. For example, feeling well and not being hungry, tired or stressed; feeling confident and motivated to learn; and an environment which supports being able to concentrate and engage in classes (e.g. not in a noisy environment).

Q16. Which of the following, if any, has your school used to address the specific needs of Care Experienced Children and Young People (CECYP)? Please select all that apply.](/binaries/content/gallery/publications/research-analysis/2025/08/attainment-scotland-fund-evaluation-school-survey-report-2025/SCT0725754512-001_g16.png)

Appendix 2: Technical appendix

Method

1. The data was collected by online self-completion survey.

2. The target group for this research study was school-based staff in Scotland.

3. The sampling frame for this study was the Scottish Government’s schools database.

4. The sample type was non-probability.

5. All schools on the sampling frame were contacted. Within-school sampling was conducted via instructions to each headteacher sent with the survey invitation, asking them to share the survey with a set number of other staff members (dependent on the size of the school).

6. No target sample size was set because the sampling approach made it hard to predict how many responses were likely to be achieved. A total of 974 responses were received.

7. At school level, the overall response rate was 25%. This response rate is fairly typical for a survey of this kind.

8. Fieldwork was undertaken between 12th March and 23rd May 2025.

9. All persons on the sampling frame were invited to participate in the study. Respondents to self-completion studies are self-selecting and complete the survey without the assistance of a trained interviewer. This means that Progressive cannot strictly control sampling and in some cases, this can lead to findings skewed towards the views of those motivated to respond to the survey.

10. The profile of schools included in the sample reflects the overall profile of schools in terms of school type, size, urban/rural classification, SIMD profile etc., so the sample is judged to represent the target population well.

11. A number of approaches were used to encourage participation in the survey:

- Using the Education Analytical Services Schools Access Protocol agreed with ADES, Directors of Education were sent early notification of the survey and given the opportunity to opt out of their schools participating.

- A letter from the Scottish Government was sent by Progressive to all headteachers ahead of the fieldwork, highlighting the importance of the survey, requesting their contribution to the evaluation and asking them to look out for the survey email invitation.

- Respondents were able to save responses and return to the survey later, to ensure they could complete it at a convenient time.

- Progressive issued survey reminders via email at agreed points throughout the fieldwork. A total of six reminders were sent following the initial invitation.

- Telephone reminders were also conducted in the second half of the fieldwork period (following the Easter holidays), targeting schools in the local authorities with the lowest response rates. Trained telephone interviewers contacted schools to remind them of the survey invitation and encourage them to take part. In some instances, respondents requested that emails were re-sent to a different address (e.g. a direct email address to the headteacher rather than a general office or admin address).

- The survey was supported by promotion via relevant local authority staff (e.g. SAC leads and Attainment Advisors), and through communication from the Scottish Government’s Director of Learning to Directors of Education via the Association of Directors of Education in Scotland (ADES), encouraging promotion of the survey and highlighting the importance of school staff taking part.

- Originally, a unique survey link was issued to each school, allowing completions by staff at each school to be monitored automatically. However, feedback from local authority (LA) staff suggested that responses might be encouraged if LA staff could share the survey link directly with schools. An open survey link was therefore generated and shared with LA staff for dissemination, in addition to the unique school links. This meant that additional survey management was required to match responses from the open link to the sample database (based on information collected at the start of the survey about school name and postcode/address), but allowed wider sharing of the link by SG/LA contacts as well as via Progressive’s survey system.

- Fieldwork dates were extended towards the end of the survey, to allow as much time as possible for people to respond. A one-week extension was added to the original survey period.

- Respondents were invited to enter a prize draw to win £500 for their school.

12. Data gathered using self-completion methodologies was validated using the following techniques:

- Usually, online surveys using client lists use a password system and/or unique links to ensure that duplicate surveys are not submitted. The sample listing is also de-duplicated prior to the survey launch. However, due to the need to share links within schools for this survey, multiple responses were permitted via each school link. Data was validated by checking school names and postcodes provided in the survey against the sample list.

- Where some profiling information has been provided on the sample list, this is also checked off against responses where possible to validate the data.

13. All research projects undertaken by Progressive comply fully with the requirements of ISO 20252, the GDPR and the MRS Code of Conduct.
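The matching of open-link responses to the sample list described in paragraphs 11 and 12 can be illustrated with a minimal sketch. It assumes each record carries a school name and postcode; the field names (`school_id`, `school_name`, `postcode`), the example records and the matching rule are hypothetical and are not taken from Progressive's actual process.

```python
import re

def normalise_postcode(value):
    """Upper-case and keep only letters and digits, so 'ab1 2cd' == 'AB12CD'."""
    return re.sub(r"[^A-Z0-9]", "", value.upper())

def normalise_name(value):
    """Lower-case, replace punctuation with spaces and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", " ", value.lower())
    return " ".join(cleaned.split())

def match_response(response, sample):
    """Return the sample school_id for a response, or None for manual review.

    Assumed rule: a postcode shared by exactly one school is enough;
    otherwise the normalised school name must also match.
    """
    postcode = normalise_postcode(response["postcode"])
    name = normalise_name(response["school_name"])
    candidates = [s for s in sample if normalise_postcode(s["postcode"]) == postcode]
    if len(candidates) == 1:
        return candidates[0]["school_id"]
    for school in candidates:
        if normalise_name(school["school_name"]) == name:
            return school["school_id"]
    return None

# Hypothetical sample list (not real schools).
sample = [
    {"school_id": "S001", "school_name": "Example Primary School", "postcode": "AB1 2CD"},
    {"school_id": "S002", "school_name": "Example Academy", "postcode": "AB1 2CD"},
    {"school_id": "S003", "school_name": "Sample High School", "postcode": "EF3 4GH"},
]

print(match_response({"school_name": "sample high", "postcode": "ef34gh"}, sample))
```

Responses that return `None` would be left for manual checking rather than dropped, which is consistent with the additional survey management the open link required.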

Data processing and analysis

14. The sampling for this study was non-probability, so we cannot provide statistically precise margins of error or significance testing. The margins of error outlined below should therefore be treated as indicative, based on an equivalent probability sample. The overall sample size of 974 provides a dataset with an approximate margin of error of between ±0.62% and ±3.14%, calculated at the 95% confidence level (market research industry standard).

15. The following methods of statistical analysis were used: Z tests.

16. Only significant differences are reported (at the 95% level, i.e. results indicate 95% confidence that the difference is not due to chance or sampling error). Not every significant difference is noted – results are highlighted where they are notable/meaningful, part of a clear pattern of results across the reporting as a whole, and/or where they add insight in relation to the research objectives.

17. The data processing department undertakes a number of quality checks on the data to ensure its validity and integrity. For online questionnaires, these checks include:

- Responses checked for duplicates where unidentified responses are permitted.

- The raw data is monitored throughout fieldwork to check for flatlining responses, quality of open-ended responses and speed of completion. Rules will be agreed with the DP team at the start to determine when to exclude data based on these checks.

18. Other data checks include:

- Every project has a live pilot stage, covering the first few days/shifts of fieldwork. The raw data and data holecount are checked after the pilot to ensure questionnaire routing is working correctly and there are no unexpected responses or patterns in the data.

- A computer edit is carried out prior to analysis, involving both range (checking for outliers) and inter-variable checks. Any further inconsistencies identified at this stage are investigated by reference back to the raw data where possible.

- Where an ‘other – specify’ code is used, open-ended responses are checked against the parent question for possible up-coding.

- Responses to open-ended questions will be spell and sense checked. Where required these responses may be grouped using a coding frame, which can be used in analysis. The code frame will be developed by the executive or operations team and will be based on the analysis of a minimum of 50 responses.

- Open-ended coding is validated using a dependent approach, whereby a second person has access to the original coding and checks a minimum of 5% of cases coded. Once responses are fully coded and validated, the completed code frame is given a final check by the Executive responsible for the project, and any queries or amends are passed back to the Data Project manager.

19. A SNAP programme was set up with the aim of providing the client with useable and comprehensive data. Crossbreaks were discussed with the client in order to ensure that all information needs were met.

20. Some partial completions were included, where respondents had completed up to and including all of the questions on impact of the ASF. This means that base sizes for the final section on sustainability vary slightly.
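The indicative margins of error in paragraph 14 and the Z tests in paragraphs 15 and 16 can be reproduced approximately with the sketch below. It assumes the standard normal-approximation formula for a proportion, that the quoted range corresponds to survey results of 1% (or 99%) and 50%, and a pooled two-proportion Z test. The report does not state which Z test variant was used, and the subgroup figures in the example are hypothetical.

```python
import math

Z_95 = 1.96  # two-sided critical value at the 95% confidence level

def margin_of_error(p, n, z=Z_95):
    """Half-width of the normal-approximation interval for a proportion p."""
    return z * math.sqrt(p * (1 - p) / n)

def two_proportion_z(p1, n1, p2, n2):
    """Pooled two-proportion Z statistic (assumed variant, see lead-in)."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    return (p1 - p2) / se

n = 974  # overall sample size from paragraph 14
low = margin_of_error(0.01, n)   # result of 1% (or 99%)
high = margin_of_error(0.50, n)  # result of 50%, the widest case
print(f"±{low:.2%} to ±{high:.2%}")  # prints "±0.62% to ±3.14%"

# Hypothetical subgroups: 60% of 500 respondents vs 50% of 474 respondents.
z = two_proportion_z(0.60, 500, 0.50, 474)
print("significant at the 95% level:", abs(z) > Z_95)
```

Because the sample is non-probability, these figures are indicative only, as paragraph 14 notes. Subgroup comparisons use smaller bases than 974, so their margins of error are wider.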

Contact

Email: Joanna.Shedden@gov.scot