Citizens' Assembly of Scotland: research report

Findings of a collaborative research project led by a team of Scottish Government Social Researchers and independent academics from the Universities of Edinburgh and Newcastle, on Scotland’s first national Citizens’ Assembly

Chapter 2: Learning and Opinion Formation during the Assembly

Research questions:

a) To what extent do participants share an understanding of the task?

b) Does participants' knowledge increase?

c) Do opinions related to the set task evolve?

d) What are the critical learning points in the process?

e) What are the critical opinion-formation points in the process?

Data sources:

- Member survey

- Expert speaker survey

- Internal interviews (organisers, facilitators, stewarding group)

- External interviews (politicians, journalists, civil servants)

- Fieldnotes

In citizens' assemblies, participants should be exposed to a range of information, from a range of speakers, but also from each other. Ideally this should enable them to learn more about the topic under consideration (Roberts, et al., 2020). In turn, this learning can lead Assembly members to reflect on their views on the issue and even change their views in light of this information, which means the recommendations an assembly produces can be based on evidence and considered opinion (Thompson, et al., 2021). In this chapter we cover the learning opportunities the Assembly members were afforded and the extent to which these influenced their views on the topics addressed by the Assembly. We then move to establish which elements of the Assembly had the greatest impact on learning and opinion change. We start, though, with a discussion of whether the Assembly members shared an understanding of the task. To answer these questions we draw on data from the member survey, expert speaker survey, internal and external interviews, and the non-participant observation fieldnotes.

Shared understanding of the task

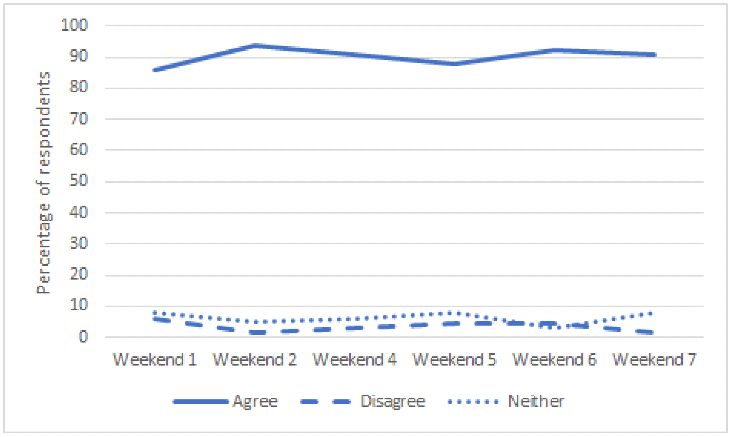

For coherent learning and opinion formation to occur across the Assembly as a whole, it is important that the members share an understanding of their role in the Assembly and in each weekend that they meet. We asked the Assembly members about this in our survey (see Appendix C). Figure 11 shows that their general understanding of the task was fairly consistent across the weekends, with over 86% either 'tending to agree' or 'strongly agreeing' that they understood what was expected of them every weekend. This understanding also strengthened as the Assembly progressed: in weekend 1, 44% 'strongly agreed', and by weekend 8 this had risen to 72%.

Source: Member survey (Question: I understand what I am expected to do over the coming Assembly weekends)

This is supported by the interviews. Interviewees did not dwell or elaborate on this point, as they overwhelmingly had the impression that members as a whole understood the overall purpose of the Assembly and their role within it. A very small number of interviewees across the groups alluded to an initial period of uncertainty about the process, after which members became familiar and comfortable with what was asked of them. The fieldnotes, however, noted a considerable level of confusion about the remit, particularly during the first half of the process. Members very seldom referred to the three-pronged remit of the Assembly during their deliberations, which suggests that it was not necessarily seen as particularly useful or usable in guiding their work.

Does participants' knowledge increase?

Due to the method of recruitment, participants in a citizens' assembly typically have a diverse range of knowledge on the issues to be considered, with some knowing little or nothing at all, meaning there is a need for learning to be built into the process (Roberts, et al., 2020).

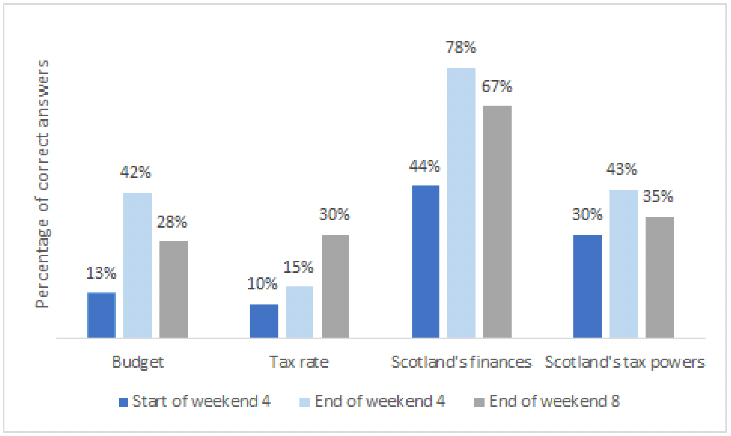

In our member survey we asked multiple choice questions about Scotland throughout the Assembly process to identify changes in objective knowledge (see Appendix C). The graph in Figure 12 shows the percentage of correct answers to questions asked about the Scottish budget, tax rates and tax powers, and Scotland's general financial situation. In the case of all four questions, when asked about these topics before learning about them at the Assembly, those that answered correctly were in the minority. Directly after weekend 4, when the Assembly focused on these issues, the percentage of correct answers increased. However, only when asked about Scotland's general finances did over 50% of respondents answer correctly. Finally, after the last Assembly session, members were asked the same questions again. There was a drop in the percentage of correct answers for three of the questions, which is understandable given that almost a year had passed since the evidence sessions covering the topic. However, there was an increase in the percentage of respondents able to remember specific income tax rates. Overall, Figure 12 demonstrates an increase in knowledge as a result of the Assembly, with two caveats. Firstly, although knowledge increased, in most cases more than half of Assembly members still answered questions incorrectly after an evidence session on the topic. Secondly, in most cases there was a decrease in knowledge retention over time.

Source: Member survey

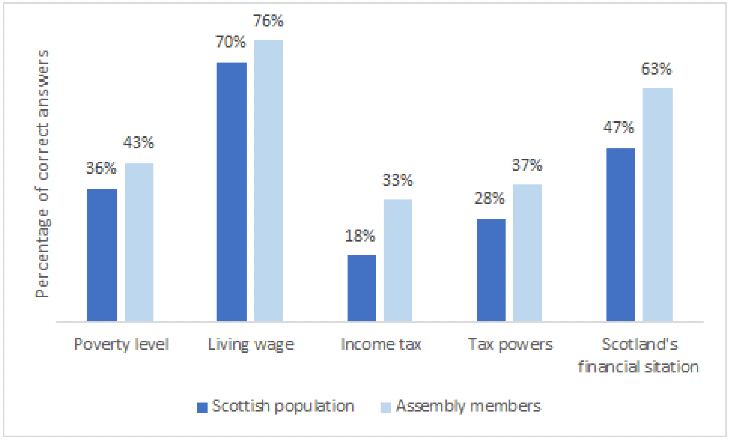

Figure 13 below shows a direct comparison of the objective knowledge of Assembly members (in weekend 8) and members of the general population on a wide range of topics. The chart shows that, by weekend 8 of the process, Assembly members consistently had more objective knowledge on average than the general population. It is important to note that this trend is not visible when one compares the knowledge of Assembly members at the beginning of the process to the general population: Assembly members tended to be around equally, or less, knowledgeable than the general population before discussing an issue in the Assembly. This suggests that, on average, objective knowledge is gained through participation in the Assembly.

Source: Member and population surveys

The Assembly members' own perceptions of their learning support these findings. After the last Assembly session, they were directly asked if they felt they had learned during the Assembly. All but one respondent 'tended to' or 'strongly agreed' that they had. Several questions also measured how much people's subjective knowledge on various topics increased (see Appendix C). In weekend 1 and weekend 8 respondents were asked, "How much, out of 10, do you feel you know about life in Scotland." The mean response increased slightly from weekend 1 to weekend 8: 7.34 to 7.83. What is more interesting is the change for individuals: 17% of respondents' subjective knowledge scores decreased, for 42% the scores remained the same, and for 41% the scores increased. On this measure, participants' knowledge increased overall, but not for every individual.
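The distinction between the change in the mean and the change for individuals can be illustrated with a minimal sketch. The scores below are hypothetical and are not the Assembly's survey data; the function name `change_shares` is likewise an assumption made for illustration. The sketch compares each respondent's paired scores and reports the share whose score decreased, stayed the same or increased, alongside the change in the mean.

```python
from collections import Counter
from statistics import fmean


def change_shares(before, after):
    """Percentage of respondents whose paired score decreased, stayed the same or increased."""
    if len(before) != len(after) or not before:
        raise ValueError("before and after must be non-empty and of equal length")
    counts = Counter(
        "increased" if a > b else "decreased" if a < b else "same"
        for b, a in zip(before, after)
    )
    n = len(before)
    return {label: 100 * counts[label] / n for label in ("decreased", "same", "increased")}


# Hypothetical 0-10 self-rated knowledge scores for ten respondents (NOT the report's data).
weekend_1 = [7, 8, 6, 9, 7, 5, 8, 7, 6, 8]
weekend_8 = [8, 8, 7, 8, 7, 7, 8, 9, 6, 8]

shares = change_shares(weekend_1, weekend_8)
mean_change = fmean(weekend_8) - fmean(weekend_1)

print(shares)  # {'decreased': 10.0, 'same': 50.0, 'increased': 40.0}
print(round(mean_change, 2))
```

In this illustrative data the mean rises even though half of the respondents report no change and one reports a lower score, which is the same kind of pattern described above.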

In weekends 2 and 4, pre and post surveys asked specific questions about the topics focused upon (see Appendix C). In weekend 2, respondents were asked about how much they felt they knew about wellbeing, quality of life, and values. 67% of respondents showed an increase in subjective knowledge for each topic, while between 9% and 13% showed a decrease in subjective knowledge. In weekend 4 the questions focused on tax and public spending. For all questions, the vast majority of respondents showed increased subjective knowledge, with 78% reporting increased knowledge on Scottish tax powers. This large increase in knowledge could be attributed to the fact that most respondents did not know very much about Scottish tax powers to begin with. Many open text responses in the survey supported the conclusion that the members learnt a lot: "I feel I have learned a lot through being part of the Citizens' Assembly" and "I have enjoyed learning more about Scotland, good or bad, and seeing the progress we've made after each meeting".

Our survey of expert speakers also indicates that they thought the Assembly members were engaging well with the information provided. The majority of respondents expected lower levels of engagement from members than they experienced, and several expressed being pleasantly surprised by members' interest and the diversity of queries and opinions:

'I slightly underestimated the level of engagement and self-reflection that members would have. And was delighted that my experience exceeded my expectations.' (Expert speaker survey)

Members' questions were described as 'pertinent', 'thoughtful' and 'generally considered':

'Questions were more detailed and engaged than I had expected.' (Expert speaker survey)

'Some excellent questions and comments from diverse perspectives – which was very reassuring.' (Expert speaker survey)

In order to further explore changes in the subjective knowledge of Assembly members, regression models were run comparing knowledge of topics before and after an Assembly session. The predictors used were demographic features and indicators of social class such as income and education. In weekend 2, evidence of democratisation of knowledge was found: before the Assembly session, income and education were significant predictors of knowledge about values and wellbeing, but after the session they lost their significance. However, the same pattern did not occur in weekend 4, which covered knowledge about taxes: income and education remained significant predictors of knowledge. This could be because how much a person knows about taxes is likely to be directly related to their income.
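The logic of a predictor "losing significance" can be sketched with a deliberately simplified example. This is not the research team's model: it assumes a single predictor (a hypothetical education level) rather than the several demographic predictors described above, uses invented scores, and the function name `slope_and_t` is an assumption. It fits an ordinary least squares line before and after a session and compares the t-statistic of the slope with the two-sided 5% critical value for four degrees of freedom.

```python
import math


def slope_and_t(x, y):
    """Fit y = a + b*x by ordinary least squares; return the slope b and its t-statistic."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least three paired observations")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual sum of squares, used to estimate the standard error of the slope.
    rss = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    std_err = math.sqrt(rss / (n - 2) / sxx)
    t_stat = math.inf if std_err == 0 else slope / std_err
    return slope, t_stat


# Hypothetical data (NOT the report's): education level and self-rated knowledge (0-10).
education = [1, 2, 3, 4, 5, 6]
knowledge_before = [2, 3, 3, 5, 6, 6]  # knowledge rises with education
knowledge_after = [6, 7, 6, 7, 6, 7]   # knowledge is similar across education levels

T_CRIT = 2.776  # two-sided 5% critical value of Student's t with n - 2 = 4 degrees of freedom

slope_before, t_before = slope_and_t(education, knowledge_before)
slope_after, t_after = slope_and_t(education, knowledge_after)

print("before:", round(slope_before, 3), abs(t_before) > T_CRIT)
print("after: ", round(slope_after, 3), abs(t_after) > T_CRIT)
```

In this invented data the slope is significant before the session and not after it, which is the pattern the chapter describes for weekend 2. A real analysis would include all predictors in one model and would normally use a dedicated statistics package.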

Indeed, our interviewees suggested that catering for different forms of learning needs could have been improved. The evidence sessions required a great deal of concentration from members and may initially have been pitched at too technical a level to be accessible to all. The impression conveyed is that the format and pitch of evidence sessions improved as the Assembly progressed, as a result of which members were more able to engage with the information. Facilitators in particular reported that the experience was both challenging and enjoyable for members.

One of the challenges to learning identified was the amount of time that passed between evidence sessions and agreeing on recommendations, due to the long break in the Assembly meetings caused by the COVID-19 pandemic. This made it more difficult for members to both recall and engage with the evidence in crafting recommendations. A few of the interviewees across the groups questioned the extent to which the recommendations were based on and informed by the evidence presented:

'I think the problem was that when you were trying to bring in material in a later weekend that had been presented in an earlier weekend people really struggled to remember.' (Facilitator, internal interviews)

'[I]t was a struggle for members a little bit to have that gap from when they heard the core evidence on a lot of the issues that we made recommendations about, and then coming back six months later.' (Organiser, internal interviews)

A few internal interviewees reflected that the breadth of the remit created challenges to planning a learning journey, possibly resulting in members engaging with evidence either inconsistently or in a fragmented way. Indicative comments on this topic from the organisers included:

'Some of the evidence was quite sort of unfocused as to what point it was there for … [T]here wasn't a clear narrative of where it was going to end, which … actively encouraged people to just pick up on something that grabbed their attention and focus in on that.' (Organiser, internal interviews)

'[Q]uite how you design a learning programme for this kind of broad remit citizens' assembly … that's one of the things that I don't really know.' (Stewarding group member, internal interviews).

Some of our external interviewees also thought that the broad remit of the Assembly had a negative effect on the provision of evidence and information as it meant that so many different topics needed to be included:

'You want to expose people to expertise and to … specific knowledge that they might not have had ready access to in the past … [I]f the subject is very broad then the material that people have to absorb is just overwhelming.' (Politician, external interviews)

'[T]he way it is just now I think they are being asked to deal with too much. They have got too much data.' (Journalist, external interviews)

Similar concerns were noted in the fieldnotes. Stimulating assembly member learning and supporting knowledge gains appears to be a complex matter linked to key aspects of process design, such as the clarity and focus of the task and a clear link between the evidence sessions and the issues to be deliberated on. For example, if members do not know what information and evidence they may need later in the process, it is difficult for them to purposefully retain and develop a particular understanding of an issue. The broad remit of the Assembly made it more challenging to 'design in' the architecture of incentives for the learning phase: because the issues to be covered in subsequent sessions were unknown, it was difficult for participants to know what evidence from the early sessions would be relevant to deliberations in later sessions.

Do opinions related to the set task evolve?

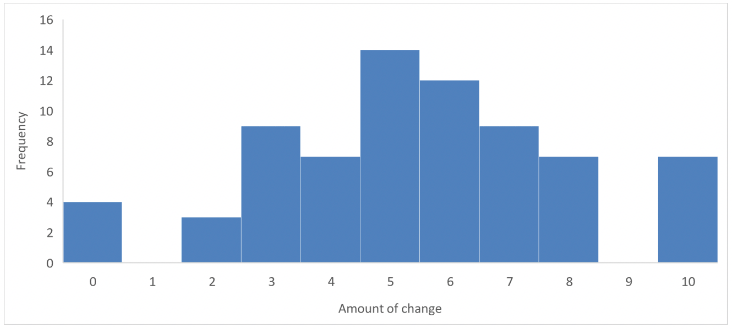

As assembly members tend not to have firm views about the issues they have been assembled to discuss, when they do learn more this can stimulate opinion change on the issue (Thompson, et al., 2021). In order to assess opinion change, in weekend 8 Assembly members were asked to report how much they felt they had changed their minds about issues discussed during the Assembly, with 0 indicating no change and 10 indicating "a great deal". The histogram below in Figure 14 shows a fairly even split in the extent of change reported, with a mean of 5.23.

Source: Member survey (Question: How much have you changed your mind about the issues discussed during the Assembly?)

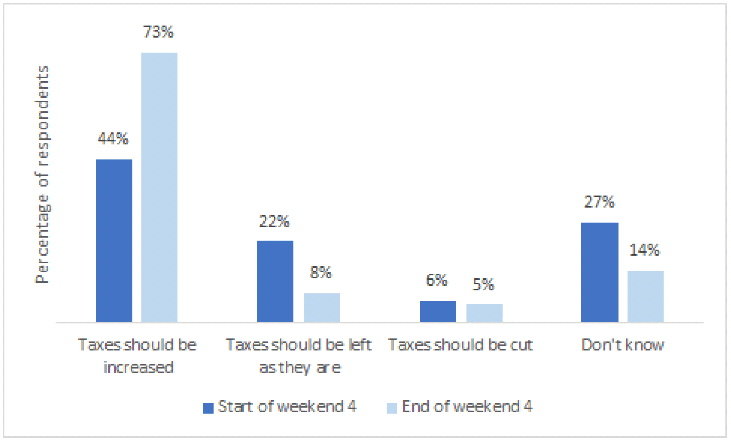

A specific example of this attitude change at work occurred in weekend 4 which focused on discussing tax in Scotland. Figure 15 below shows the way Assembly members' attitudes changed drastically between the start and end of the weekend. Fewer reported not knowing how they felt about the issue and generally members ended up favouring an increase in taxes rather than no change or cuts.

Source: Member survey (Question: Which of these statements comes closest to your own view?)

Interestingly, neither demographic factors nor issue opinions had a significant effect on attitude change in individuals. However, the fairer a member thought the process was and the more influential they thought they were, the more likely they were to have changed their opinions significantly during the Assembly. This suggests that, regardless of social groupings before the Assembly, engagement with the process itself appears to have had the power to significantly change people's opinions.

What are the critical learning points in the process?

We have established that the Assembly members learnt as the process developed. In this section we explore which parts of the Assembly process were most critical in achieving this learning.

The fieldnotes include comments on numerous instances when members shared insights that they framed as newfound knowledge or perspectives. Some members did explicitly acknowledge that they learned a lot throughout the process (from speakers and members), and that this was challenging, eye-opening and enjoyable. It seems that members were learning as much, if not more, from listening to each other's experiences and arguments as they were from the more formal evidence sessions. This suggests that "knowledge" travels and evolves in interesting ways within the Assembly process. For example, points made by some speakers sometimes landed better once they were translated by members and brought into the deliberations alongside other forms of knowledge provided by members (e.g. local, experiential, professional).

The fieldnotes further show that a wide range of evidence, and different types of knowledge, were mobilised in different ways and at different stages of the Assembly. Some members wanted to find out more about what is happening in Scotland in ways that are more connected to 'the real world' (i.e. less abstract and more tangible). In some groups, the conversations only got going properly when they managed to connect evidence to their own experiences. There were numerous instances when members contributed evidence from their own experiences (e.g. getting into debt with credit cards and paying interest for a long time; loss of employment, especially during the pandemic).

The research indicates that despite members' self-reported levels of satisfaction and understanding, the presentations and information packages were of variable accessibility. A number of factors contributed to this. The breadth of the remit required members to consider evidence on a wide range of topics within a relatively short timescale, resulting in several presentations within the day.

Some interviewees across the different groups (organisers, facilitators, stewarding group members and expert speakers) thought the format and pitch of the information created challenges and suggested that catering for different forms of learning was limited. For example, various facilitators noted that the didactic ("talking head") format of the evidence sessions required a great deal of concentration from members:

'I think the other big thing would have been, a different form of information giving for people. You know, that from the front, very didactic thing … I know that didn't suit people … [O]nce we were in the lecture theatre, I sat next to someone explaining what was being said from the front … and I could see him just being overwhelmed.' (Facilitator, internal interviews)

'[B]ecause everyone engages differently, everyone learns differently, everyone listens differently, everyone receives information differently and everyone likes to share opinions and information differently. You've got to use a mix.' (Facilitator, internal interviews)

'I did think it a bit odd that despite us consistently saying that folk were spending too much time listening to talking heads, that we still did more of that. I do understand that the evidentiary process needs people to impart knowledge, but I did also think that we were consistently getting a picture that citizens weren't keen on reading things and were uncomfortable sitting for too long, and therefore it seemed a bit crazy to keep doing that.' (Facilitator, internal interviews)

More participatory forms of learning were encouraged by the research team but were not widely adopted, in part due to time constraints. This was despite feedback about the exercise organised as part of the evidence session on taxation in weekend 4 indicating that members were receptive to other forms of learning:

'[I]n terms of the accessibility and the different learning styles, it was something we were maybe conscious about at the beginning and we probably would have liked to do more. Present evidence in more different types of ways … so doing more things like the tax game, doing more of that kind of creative evidence presentation, because we recognised that we were doing things in quite an old-fashioned, someone stands up and talks then you sit around and talk about it, and that is not a good learning style for everyone.' (Organiser, internal interviews)

Question and Answer sessions were considered especially beneficial for member engagement, allowing expert speakers to either clarify aspects of the presentations that had not been fully understood or to provide "basic" explanations that had been left out of the presentation due to being assumed to be general knowledge. Taken in combination with other sources of data, this strongly suggests that some of the presentations were initially pitched at too technical a level to be widely accessible, with expert speakers reporting that this was one of the main challenges to preparing for their involvement.

In addition, member engagement level depended considerably on expert speakers' individual relatability and communication skills, which is in line with findings from previous studies (Roberts & Escobar, 2015; Roberts, et al., 2020). Organisers reported that the format and pitch of evidence sessions improved over the course of the Assembly, due in part to feedback from the research team and the Member Reference Group.

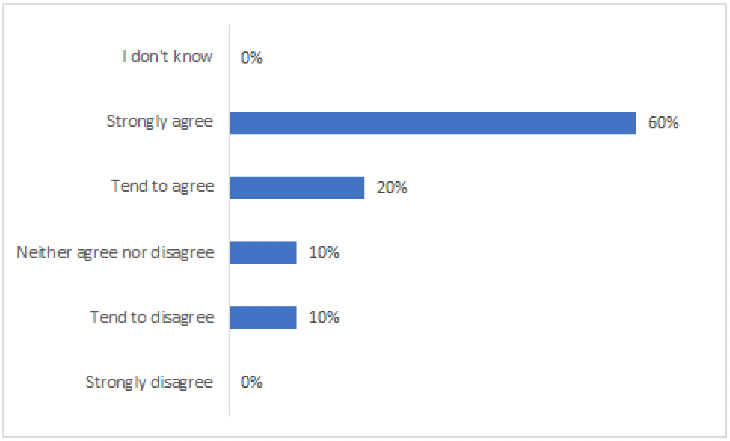

While all expert speakers agreed they understood their role within the Assembly process (80% strongly agreed), the responses indicated that 30% did not feel entirely adequately briefed about what was required from their contribution (Figure 16).

Source: Expert speaker survey (Question: I was adequately briefed about what was required from my contribution)

The view that most expert speakers were well briefed is further evidenced by qualitative responses to questions within the survey:

'One of the things that impressed me … was the amount of work put in by the secretariat to make it work effectively – that includes the preliminary discussions with speakers, the briefings of speakers about what was wanted and how the Assembly worked, and the consideration about the sessions and what could/should be learnt from the sessions.' (Expert speaker survey)

'It was extremely well organised and I felt well supported throughout. The information provided in advance was comprehensive and clear.' (Expert speaker survey)

The two main areas for improvement suggested by expert speakers who felt they were not adequately briefed were more clarity on what the contribution would involve and more time to prepare:

'It felt a bit rushed – more time could have been given to discuss with other presenters what they were going to do on the day.' (Expert speaker survey)

'[A]n improvement would have been longer lead times and more clarity from the outset about what I was expected to contribute.' (Expert speaker survey)

Some frustration was expressed at having the specifications for the contributions changed or expanded at short notice:

'The request to be involved came quite late, the nature of that request (what inputs I was required to give) changed (and expanded).' (Expert speaker survey)

'I think the only frustrating element was that some of the Assembly activities were clearly being made "on the hoof".' (Expert speaker survey)

'Ideas about process/format changed within a short space of time. This didn't have a massive impact on my preparation but left a slightly nagging feeling of uncertainty.' (Expert speaker survey)

For the first half of the Assembly, formal evidence presented and written by various speakers seemed more prominent, although members sometimes did seek to relate it to their own expertise and experience. For the second half (online stage), experiential, professional and local knowledge became more prominent. The fieldnotes offer a very limited indication that members engaged with evidence and other material in the periods of time between Assembly weekends; but there are exceptions and some members seemed particularly well prepared towards the final sessions of the Assembly while developing recommendations.

What are the critical opinion-formation points in the process?

We have established that Assembly members changed their views on various issues being considered in the Assembly. In this section we consider which parts of the Assembly were the most crucial for these opinion changes.

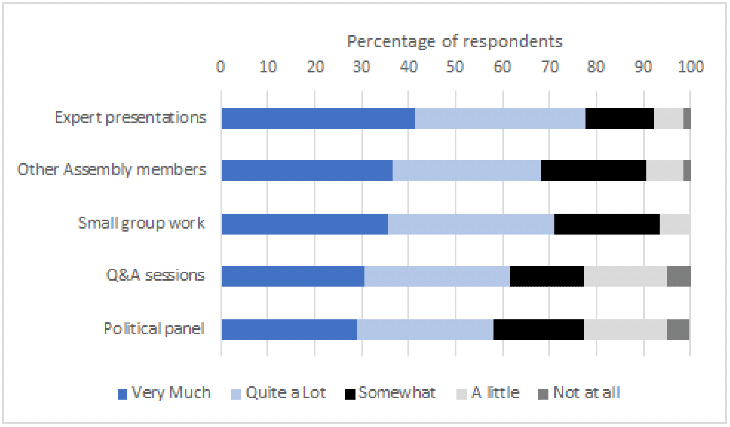

When asked which Assembly activities contributed to any change in opinion, generally respondents seemed to be most influenced by the expert speaker presentations and least so by the political panel. This suggests that Assembly members found experts more persuasive than politicians. Regarding the influence of deliberation within the group on changing opinions, members did report being influenced by each other and their small groups, only slightly less so than by the expert presentations. Of particular interest is the fact that not a single member responded that small group discussions did not influence their opinions at all (see Figure 17).

Source: Member survey (Question: How important were the following activities for changing your mind?)

In line with the member survey data, the fieldnotes also suggest that listening to the diverse experiences and perspectives of other members was especially critical in opinion-formation. This seemed particularly so once the Assembly reconvened in the context of the pandemic, which seemed to be a turning point in influencing the types of reflections and strength of propositions made by Assembly members. The fieldnotes suggest that some of the evidence sessions were very influential, judging by some of the issues emphasised during group discussions (e.g. poverty, taxation, redistribution, and green policies) and language used in many contributions and statements (e.g. 'wellbeing economy', incentives and disincentives for the right/wrong behaviours by individuals and companies).

The Assembly's organisers interviewed raised questions about the extent to which members were exposed to a diversity of viewpoints or a balance of evidence. The breadth of the remit, the timescale for preparation and the composition of the stewarding group (who did not feel able to vet the evidence or identify speakers from a position of expertise) were all identified as creating challenges to planning the evidence sessions. One indicative comment on this topic was:

'I fear that the Citizens' Assembly had an inadequate exposure to the breadth of ideas, the breadth and depth of ideas. I felt it had a particular orientation, and I'm not surprised at the outcomes of the Citizens' Assembly. It would be unfair, perhaps, to suggest that the topics and the speakers effectively predetermined, or primed the outcome, but I remain concerned about whether we really exposed the Citizens' Assembly to the breadth of academic, policy, philosophical, and other thinking which is out there on the topics.' (Stewarding group member, internal interview)

Some of our interviewees also indicated that there was insufficient time given to recruit expert speakers and that this in turn had an impact on the quality of the evidence sessions.

Similarly, some fieldnotes do flag that the range of issues and speakers may be questioned in terms of whether there was enough representation of neoliberal and conservative perspectives, albeit some speakers did offer moderate takes on taxation and economic policy.

Conclusion

In a citizens' assembly, it is important for the participants to learn more about the issues being covered, and for this learning to have a bearing on their views on these issues. In the Assembly, information was provided through a range of experts and advocates on various issues. There is evidence to suggest that the Assembly members did learn from the expert speakers and from each other and that this had a bearing on their opinions. Moreover, it seems apparent that by the end of the process they knew more than the average member of the public about these topics.

Nevertheless, the learning was limited for a number of reasons. Firstly, the remit of the Assembly was very broad. This meant it was difficult for the organisers to decide what topics the Assembly members needed information on, but it also meant it was challenging for the Assembly members to know what information would be needed later in the Assembly process. Secondly, the long gap in the Assembly, prompted by the COVID-19 pandemic, meant that the Assembly members struggled to recall some of the information provided in the early stages of the Assembly when they moved online to form their recommendations. As a result, some of the Assembly's final recommendations may not be based on evidence provided in the Assembly. Thirdly, there is some concern about the diversity of the perspectives provided by the expert speakers, compounded by short timescales to recruit experts. Although most of the expert speakers who were recruited felt they were well briefed, others were frustrated that their remit changed at short notice. Fourthly, the approach to evidence provision lacked diversity, which could have hindered the learning of some members. More opportunities for interaction between Assembly members and expert speakers could have helped.

Contact

Email: socialresearch@gov.scot