Rural affairs, food and environment research programme 2016 to 2022: evaluation report

This report presents the findings of an evaluation of the impact of the rural affairs, food and environment research programme 2016 to 2022.

3. Approach to the Evaluation

3.1. Overview

This section briefly describes the approach to the evaluation from project inception to reporting. Additional information is available in the technical annexes.

3.1.1. Project inception

The RPA study team held an online inception meeting with the Scottish Government. Discussions covered the aims and objectives, as well as the proposed approach to the evaluation.

3.1.2. Development of the evaluation framework

Following project inception, the study team developed an evaluation framework to structure the evaluation. This involved developing a Theory of Change (ToC) and setting out the evaluation questions and indicators.

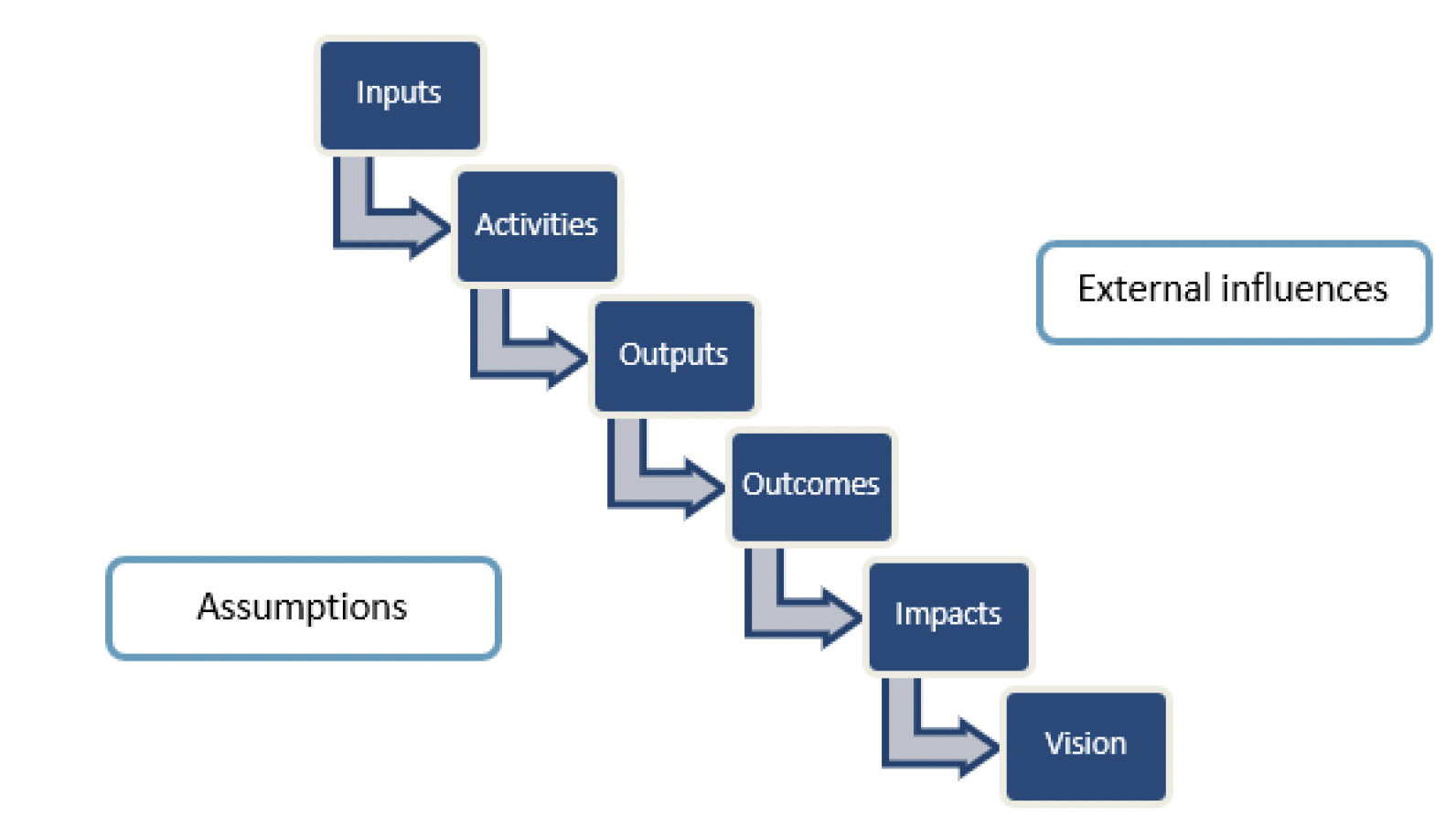

The RPA study team collected publicly available information about the Strategic Research Programme (SRP), such as the annual Research Highlights published by SEFARI (Scottish Environment, Food and Agricultural Research Institutions), and used this information to develop an outline ToC. Figure 3-1 shows the key components of a ToC. A ToC aims to show the causal chain from inputs through to the vision[8]. It also enables the identification of any external influences and assumptions that affect this chain.

For this evaluation, the ToC was intended to show how the Programme was expected to work to achieve the vision set out in the Strategy. Developing the ToC also helped the study team to increase their understanding of the SRP and the Strategy. Scottish Government stakeholders worked through the ToC components and discussed any assumptions and external influences that could affect progress towards the vision. The final ToC can be found in Section 2 of the technical annexes.

Drawing on the ToC, the study team developed a set of high-level evaluation questions based on the Strategy and the aims and objectives of the evaluation. The evaluation questions are listed below. Each question is coded according to whether it is a process question (P), an impact question (I) or a question related to the Covid-19 extension year (2021/22) (C).

- P1: To what extent did the delivery team structure enable effective and efficient operation of the Programme?

- P2: To what extent were resources effective in facilitating the Programme's delivery?

- P3: To what extent was the funding application process effective and efficient?

- P4: To what extent were monitoring and reporting requirements effective?

- I1: Has the research been delivered in line with the vision?

- I2: To what extent has the Programme helped deal with challenges faced by the Scottish Government?

- I3: Which of the national outcomes identified in the Strategy has the Programme contributed towards, and to what extent?

- I4: What environmental, economic and social impacts to Scotland did the research themes achieve?

- I5: What 'ways of working' did the research Programme enable?

- I6: What is the estimated economic impact of the Programme?

- C1: How was the extension year designed, administered and implemented?

- C2: What impacts did Covid-19 and the UK leaving the European Union have on the extension year's administration?

- C3: To what extent did research funded through the Programme extension look at the impacts of Covid-19, the recovery from Covid-19 and data emerging from the Covid-19 pandemic?

- C4: To what extent did the Programme extension support the recovery of research and experiments that had been affected by the pandemic?

- C5: To what extent did research funded through the Programme extension look at the impacts of EU-exit?

- C6: To what extent was the Programme extension effective? What impacts did it have beyond those relating to Covid-19, the recovery of projects and EU-exit?

To develop the evaluation framework, the study team identified indicators for each question along with relevant data sources. Analysis methods were then added, taking account of the types of data required (e.g. interview evidence). The full evaluation framework, including the indicators, data sources and analysis approach, is provided in Section 3 of the technical annexes.

3.1.3. Desktop assessment

The desktop assessment involved reviewing the Programme data, collating information on Programme inputs and outputs, and carrying out initial analysis to provide partial answers to some of the evaluation questions.

The study team reviewed the documentation received from the Scottish Government using a spreadsheet-based proforma. The proforma was set up so that every document could be assessed to determine funding and in-kind inputs (e.g. staff time), research activities and outputs (scientific publications, policy outputs, etc.). The assessment also identified initial benefits from the research (outcomes) as well as potential longer-term benefits (impacts). This step provided a searchable resource for the remainder of the evaluation.

3.1.4. Engagement

The evaluation aimed to gather information from stakeholders involved with the Programme through a series of online interviews. Stakeholders identified as relevant to the evaluation included representatives of Main Research Providers (MRPs), Centres of Expertise (CoEs) and Additional Research Providers (ARPs), as well as Scottish Government staff. A set of interview questions was developed for each of these stakeholder types, using the evaluation indicators as a guide to the topics to cover. The interview questions captured the process, impact and Covid-19 extension year elements of the evaluation. The study team also developed a participant information sheet and consent form. Final versions of the interview questions, participant information sheet and consent form are provided in the technical annexes.

The study team developed a sampling strategy which was agreed with the Scottish Government. The strategy identified the types of stakeholders whom the evaluation should attempt to interview based on the following criteria:

- Organisation: Scottish Government, Main Research Provider (MRP), Centre of Expertise (CoE), Additional Research Provider (ARP);

- Research theme: 'Food, health and wellbeing'; 'Productive and sustainable land management and rural economies'; and 'Natural assets';

- Type of role: Conducting research; and Administration and procurement; and

- Evaluation area: Strategic Research Programme; Underpinning Capacity; CoEs; and Programme extension year.

Initially, the study aimed to conduct 20 interviews. During development of the sampling strategy, it was agreed with the Scottish Government to increase this to 30 to capture more combinations of stakeholder types (e.g. both science advisors and programme managers at the Scottish Government). The Scottish Government subsequently provided around half of the interviewee contacts and suggested which individuals would fit the sample. To reduce the risk of bias, the study team identified the remaining interviewees by reviewing a list of contacts from the Scottish Government and by asking early interviewees for suggestions.

The study team invited potential interviewees by email and held 30 online interviews, broken down as shown in Table 3-1. Only one ARP representative was interviewed. This reflects the staffing of the 2016-22 Programme, in which most staff were employed by MRPs, with smaller numbers at CoEs, a proportion of whom would be based at a Higher Education Institution.

Table 3-1: Number of interviews held by type of organisation

| Type of organisation | Number of interviews held |
|---|---|
| MRP | 18 |
| CoE | 6 |
| ARP | 1 |
| Scottish Government | 5 (2 Programme managers and 3 science advisors) |
| Total | 30 |

All interviewees were provided with a copy of the participant information sheet and consent form. The study team took notes during the interviews, using recordings to support this process. After each interview, interviewees were given the opportunity to review and edit the interview minutes.

The study team transferred information from the minutes to a spreadsheet organised by evaluation question. This ensured that all material relevant to any one question was collated in one place for analysis.

3.1.5. Analysis

Programme data

Analysis of Programme data was mainly carried out in Excel, with the aim of describing the Programme's characteristics and identifying trends and patterns in the data on Programme inputs (e.g. staff) and outputs (e.g. peer-reviewed publications).

Using the proforma developed as part of the desktop assessment, the study team identified three case study projects from the Programme data. The projects were selected to provide examples of the types of work carried out under the Programme, with the aim of covering each of the three research themes and demonstrating work undertaken by different organisations.

Programme data were also used to estimate the potential economic impact of the Programme. A valuation framework was developed, considering economic, environmental and social impacts. Programme outputs were then mapped against these impacts, with the impacts quantified and monetised where possible.

Interview data

Interview data were analysed by question, with a single member of the study team responsible for each question so that all comments and feedback on it were reviewed consistently. The analysis involved identifying key themes in the data and, where possible, determining the strength of the evidence for those themes. This helped to establish whether a comment was an isolated viewpoint or was shared by several interviewees.

Data from the interviews were also compared against the Programme data, to check whether the situation shown by the Programme data reflected the perceptions of interviewees and to explore the reasons behind any trends and patterns. Interview data were also used to help identify the impacts of the research undertaken, including instances where research had informed policy.

3.1.6. Reporting

This report takes account of both Programme documentation and interview data to evaluate the Programme. The findings section has been organised as follows:

- Assessment of the Programme against the vision and principles in the Research Strategy (objective 5);

- Outputs from the Programme (part of objective 1);

- Impact to Scotland from the research undertaken (objectives 2 and 3); and

- Programme inputs and delivery (objective 4 and part of objective 1).

The evaluation questions have been allocated to the above areas and used to develop the findings.

Contact

Email: socialresearch@gov.scot