The 5 Step Approach to Evaluation: Designing and Evaluating Interventions to Reduce Reoffending

Updated guidance on how to use the 5 Step approach to design and evaluate criminal justice interventions.

Step 4: Monitor your logic model

Use the logic model to agree evaluation questions and indicators

Once the logic model is completed, you need to figure out how you will be able to tell if your model works as predicted, or not. To do this, you should:

1. Agree priority "evaluation questions". People often jump to data collection before they have decided what they need to find out. This can lead to collecting data that isn't useful, which wastes resources. Before you think about the data, draft specific questions that you need to answer to test whether the model is working as predicted (see the Testing the logic model section for examples). Don't pose too many questions or try to measure too many outcomes: the size and cost of the evaluation should be proportionate to the size and cost of the service. Agree on approximately 3-4 questions to focus the evaluation on what is most important to know.

Beyond evaluation questions: Developing and testing theories of change

Stating your theories of change goes a step further than evaluation questions.

Every logic model of a service has one or more theories of change underpinning it, even if they're not stated explicitly. Stating a theory of change means making it absolutely clear why you think your project will lead to its intended outcomes. You then collect data to 'test' whether your theories stand up.

For example, a project that tries to secure employment for offenders could be based on the following 3 key theories of change:

- Employment should give offenders a sense of accomplishment and purpose which they would be reluctant to lose as a result of further offending

- Employment will provide an income which should mean crimes of dishonesty should decrease

- Employment should help offenders form new relationships with non-offending peers, making them less likely to be influenced by others to reoffend

You then collect relevant data to test whether any of these theories held up. As well as numerical data you could survey or interview users who did not reoffend to understand why they desisted from crime. Perhaps none of your theories hold up and they give a completely different reason why they didn't reoffend! Once you know which theory was supported by the evidence, it can inform how your employment project develops.

Use the logic model to identify indicators

2. Identify specific indicators (measures or signals of some kind) that can answer these questions and therefore provide evidence that your model is or isn't working as expected. For example, an offender employment programme could measure some or all of the following:

- User feedback on what an employment programme provided, compared with what was intended

- User feedback on what aspects were most and least useful in helping them find employment

- The number/percentage of users who completed and dropped out of the employment programme

- The characteristics (age, gender etc) of users who completed and dropped out of the programme

- The number/percentage of users who gained employment and stayed in work for 6 months or more

- The number and percentage of users who found employment who said they would not go back to offending because it gave them a feeling of accomplishment

- The number of crimes of dishonesty committed by users before and after finding employment

- Perceptions of whether employment helped forge new positive relationships

Warning!

If centrally collected national data is not available, collecting new outcome indicators for large national strategic programmes or reforms is not easy. The reality of collecting outcomes data from thousands of individuals who flow in and out of services and systems across the country can be prohibitively difficult, time-consuming and resource intensive. The following questions need to be addressed:

- What outcomes data is relevant as an indicator of performance?

- Who would the data have to be collected from e.g. prisoners, people on an order, people in services, people back in the community? Is this feasible?

- How is the data going to be collected and how frequently?

- Who is responsible for collecting the data and analysing it?

- Can data be collected and analysed consistently across a range of areas?

- Are outcomes completely within the sphere of influence of the organisation(s) being performance managed, or do external factors (outside the organisation's control) influence outcomes?

If it is not possible to collect outcomes data, then collecting information on the delivery of activities and outputs (things that organisations should be doing to contribute to achieving outcomes as per the logic model) is advised.

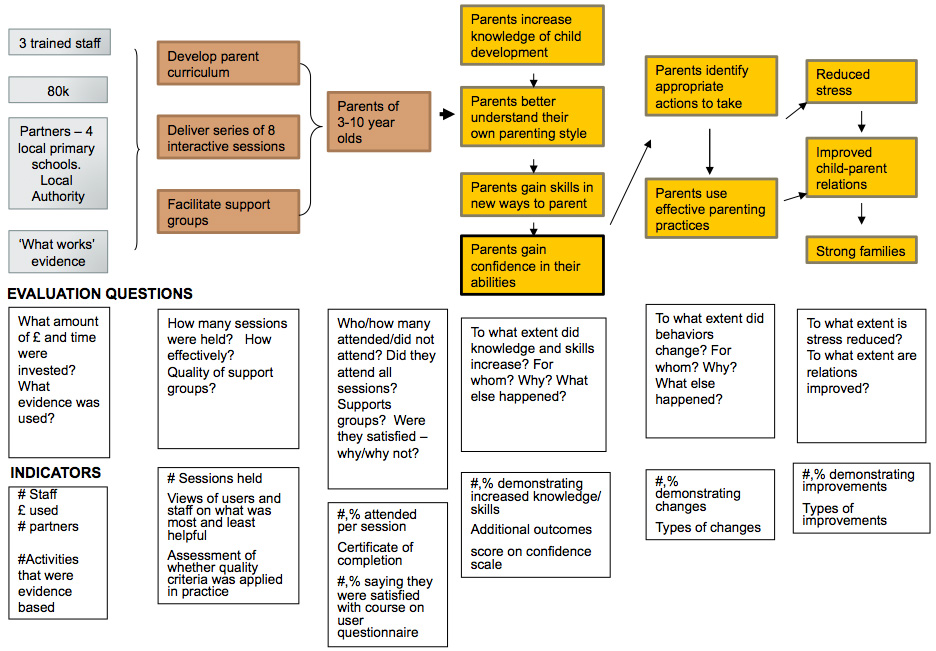

Identify indicators - parenting skills example

Use the logic model to set evaluation questions to identify indicators. This will guide the collection of data: Parenting skills example

Source: University of Wisconsin

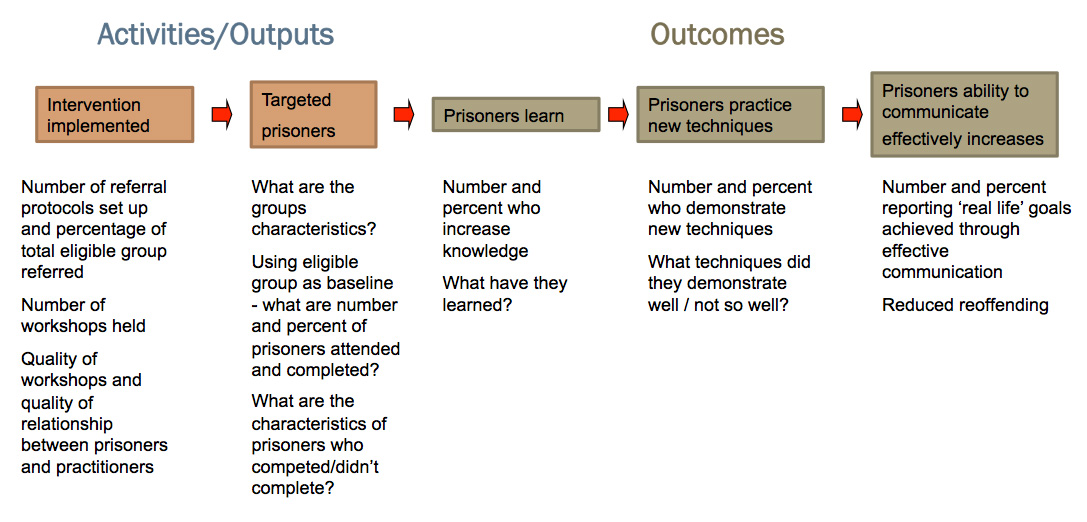

Identify indicators - prisoner skills example

Example indicators for activities and outcomes: prisoner skills example

Data collection principles

Now you've identified your indicators, you need to decide on a way of measuring or observing these things. There are lots of different methods you can use to collect this data but some basic principles to observe are:

- Collect data for every stage of your logic model, including resources and activities as well as outcomes.

- Collect data at a unit level (i.e. about every user of the service) and at an aggregate level (i.e. about the service as a whole). Unit level data can be very useful as it can tell you who the service is working for and who it isn't, and you can follow the progress of individuals over time. It can also be combined to give you overall data about your service. But remember, if you only collect aggregate data you will not be able to break it down again, so you will have no evidence about particular individuals.

- Follow users through the project. You should collect data about users at the very start, throughout and ideally beyond completion of the project. This will enable you to evidence whether users have changed, in terms of their attitudes, behaviour or knowledge.

TIP! Focus on finding indicators that measure the quality of what people do (activities). Unless people deliver a service to a high standard, it is unlikely that outcomes will materialise. Also, if outcomes are hard to measure, focus on quality assurance indicators.

- Make use of numbers and stories. Collect qualitative as well as quantitative evidence. Averages and percentages can help you to assess overall trends and patterns in outcomes for service users. Talking to people, hearing about the views and experience of users and stakeholders will help you to explain these patterns.

- Don't reinvent the wheel. Standardised and validated (pre-tested) tools are available to measure such things as needs, attitudes, motivation, wellbeing and employability. Using these will enhance the reliability of your evidence and save you valuable time. Freely available tools are detailed here:

http://www.clinks.org/sites/default/files/UsingOffShelfToolstoMeasureChange.pdf

http://www.evaluationsupportscotland.org.uk/resources/tools/

http://inspiringimpact.org/resources/

(follow link to "List of Measurement Tools and Systems")

- Be realistic and proportionate. Expensive and/or experimental projects should collect greater amounts of data than well-evidenced and established, cheaper projects. You might want to give questionnaires to all users, but it would usually be sensible to carry out in-depth interviews with a smaller sample, as long as the sample includes both users who achieved outcomes and those who did not, to avoid bias.
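The principle above about unit level and aggregate data can be illustrated with a minimal sketch. The records, field names (`age_group`, `completed`) and the helper `completion_by_group` are all hypothetical, invented purely for illustration. The sketch shows that unit level records can be rolled up into an overall figure and also broken down by a user characteristic, which would not be possible if only the overall figure had been collected.

```python
from collections import defaultdict

# Hypothetical unit level data: one row per service user.
users = [
    {"user_id": "u1", "age_group": "16-24", "completed": True},
    {"user_id": "u2", "age_group": "16-24", "completed": False},
    {"user_id": "u3", "age_group": "25+", "completed": True},
    {"user_id": "u4", "age_group": "25+", "completed": True},
]


def completion_by_group(rows, key):
    """Return {group: (number completed, total users)} for one characteristic."""
    tally = defaultdict(lambda: [0, 0])
    for row in rows:
        tally[row[key]][1] += 1          # count every user in the group
        if row["completed"]:
            tally[row[key]][0] += 1      # count completers only
    return {group: tuple(counts) for group, counts in tally.items()}


# Aggregate figure for the service as a whole.
overall = (sum(1 for r in users if r["completed"]), len(users))
# Breakdown that is only possible because unit level data was kept.
by_age = completion_by_group(users, "age_group")

print("overall (completed, total):", overall)
print("by age group:", by_age)
```

Here the overall figure (3 of 4 users completed) hides the fact that, in this made-up data, all of the non-completion sits in one age group.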

Data collection methods

Various methods can be used to collect data in relation to your evaluation questions. Data can be collected from service users, staff or outside agencies. Not all methods will be suitable for all projects. Evaluation Support Scotland have produced excellent guidance on using different approaches.

- Using Interviews and Questionnaires http://www.evaluationsupportscotland.org.uk/resources/129/

- Visual Approaches http://www.evaluationsupportscotland.org.uk/resources/130/

- Using Qualitative Information http://www.evaluationsupportscotland.org.uk/resources/136/

- Using Technology to Evaluate http://www.evaluationsupportscotland.org.uk/resources/131/

More general advice on generating useful evidence can be found in the "Evidence for Success" guide http://www.evaluationsupportscotland.org.uk/resources/270/

TIP! The most rigorous evaluations will be based on data collected using a range of methods.

Data capture

You need a way of capturing and storing the data you collect which will make it easy for you to analyse.

- Input data into an Excel spreadsheet (or any other database that allows the data to be analysed rather than just recorded).

- Some data could be simply recorded as raw numbers such as costs, number of staff or age.

- Some data might be recorded using drop-down menus, e.g. user characteristics (ethnicity, male/female), response options in questionnaires or attendance at a particular session.

- Qualitative data (e.g. from interviews and focus groups) may need to be transcribed or recorded via note-taking.
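As a rough sketch of the data capture points above, the example below stores one record per user, keeps raw numbers as numbers, and restricts a questionnaire answer to a fixed set of options in the way a drop-down menu would. The record layout (`UserRecord`), the option list (`RATING_OPTIONS`) and the helper `to_csv` are assumptions made for illustration, not a prescribed format. The output is CSV text, which a spreadsheet can open.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields

# Hypothetical fixed response options, playing the role of a drop-down menu.
RATING_OPTIONS = ("very enjoyable", "enjoyable", "neutral", "not enjoyable")


@dataclass
class UserRecord:
    user_id: str
    age: int                # raw number
    attended_session: bool  # attendance at a particular session
    session_rating: str     # must be one of RATING_OPTIONS

    def __post_init__(self):
        # Reject free-text answers so the column stays easy to analyse.
        if self.session_rating not in RATING_OPTIONS:
            raise ValueError(f"unknown rating: {self.session_rating!r}")


def to_csv(records):
    """Serialise records to CSV text with one row per user."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(UserRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


records = [
    UserRecord("u1", 16, True, "enjoyable"),
    UserRecord("u2", 17, False, "neutral"),
]
csv_text = to_csv(records)
print(csv_text)
```

Constraining answers at the point of entry means percentages and counts can be calculated later without first cleaning up inconsistent spellings.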

Data analysis

Numerical data or "tick box" answers might be analysed and reported using percentages and/or averages. E.g. "the median (average) age of users was 16" or "80% of users rated the sessions as 'enjoyable' or 'very enjoyable'."

BUT remember to report actual numbers as well as percentages, especially if you have only a small number of users. It can be misleading to say 66% of users attended a session if there are only 6 users in total, because that percentage represents just 4 people.
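One way to make this habit routine is to generate the count and the percentage together. The sketch below is illustrative only; the function name `describe_share` and its wording are assumptions, not part of the guidance. It uses the case of 4 attendees out of 6 users (about two thirds).

```python
def describe_share(count: int, total: int, label: str) -> str:
    """Report a proportion as 'n of N users (p%)' so small samples stay visible."""
    if total <= 0:
        raise ValueError("total must be a positive number of users")
    if not 0 <= count <= total:
        raise ValueError("count must be between 0 and total")
    percent = round(100 * count / total)
    return f"{count} of {total} users ({percent}%) {label}"


# prints: 4 of 6 users (67%) attended a session
print(describe_share(4, 6, "attended a session"))
```

Reporting "4 of 6 users" alongside the percentage lets the reader see immediately how small the group is.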

Where you have collected qualitative data (e.g. answers to open questions or interviews), go through all of the responses and highlight where common responses have been made by different people. These common responses can be reported as 'themes', to summarise the kinds of things people have said in their answers.
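Once responses have been read and tagged by hand, counting how many different people raised each theme is straightforward. The sketch below assumes the evaluator has already assigned theme labels to each response; the user IDs, theme names and the helper `count_themes` are hypothetical. It does not attempt to identify themes automatically.

```python
from collections import Counter

# Hypothetical coded responses: each interview has been read and tagged
# by the evaluator with the themes it touches on.
coded_responses = {
    "user_01": ["sense of purpose", "income"],
    "user_02": ["new peers"],
    "user_03": ["sense of purpose"],
    "user_04": ["sense of purpose", "new peers"],
}


def count_themes(coded):
    """Count how many different people raised each theme."""
    counts = Counter()
    for themes in coded.values():
        counts.update(set(themes))  # set() so one person counts once per theme
    return counts


theme_counts = count_themes(coded_responses)
for theme, n in theme_counts.most_common():
    print(f"{theme}: raised by {n} of {len(coded_responses)} users")
```

Counts like these only summarise how widely a theme was shared; the quotes and explanations behind each theme still need to be reported in words.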

An example data collection framework for a criminal justice intervention

A data collection framework is really useful for evaluators. It is a document, often in the form of a table, clearly setting out:

- What data you will collect in relation to each stage of the logic model

- From whom or what you will collect your data

- Where and how you will record your data (e.g. on a database)

Appendix 2 shows an example of a fictitious data collection framework for a criminal justice intervention.
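For those who keep their framework electronically, the three elements listed above can be held as simple rows, one per item of data. The sketch below is a hypothetical illustration and is not the framework shown in Appendix 2: the row contents, the stage names in `LOGIC_MODEL_STAGES` and the helper `stages_without_data` are all assumptions. It also checks whether any stage of the logic model has no data planned against it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FrameworkRow:
    logic_model_stage: str  # which stage of the logic model the data relates to
    data_to_collect: str    # what data you will collect
    source: str             # from whom or what you will collect it
    recorded_in: str        # where and how it will be recorded


# Hypothetical rows for an employment programme.
framework = [
    FrameworkRow("Activities", "Number of sessions delivered",
                 "Staff", "Programme database"),
    FrameworkRow("Outputs", "Number of users completing the programme",
                 "Attendance records", "Programme database"),
    FrameworkRow("Outcomes", "Users in work 6 months after completion",
                 "Follow-up questionnaire", "Programme database"),
]

# Assumed stage names; use whatever stages your own logic model has.
LOGIC_MODEL_STAGES = ["Resources", "Activities", "Outputs", "Outcomes"]


def stages_without_data(rows, stages):
    """List the logic model stages that no framework row covers yet."""
    covered = {row.logic_model_stage for row in rows}
    return [stage for stage in stages if stage not in covered]


missing = stages_without_data(framework, LOGIC_MODEL_STAGES)
print("Stages with no data planned:", missing)
```

In this made-up framework the check flags that nothing is being collected about resources, which is a prompt to add a row before data collection starts.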